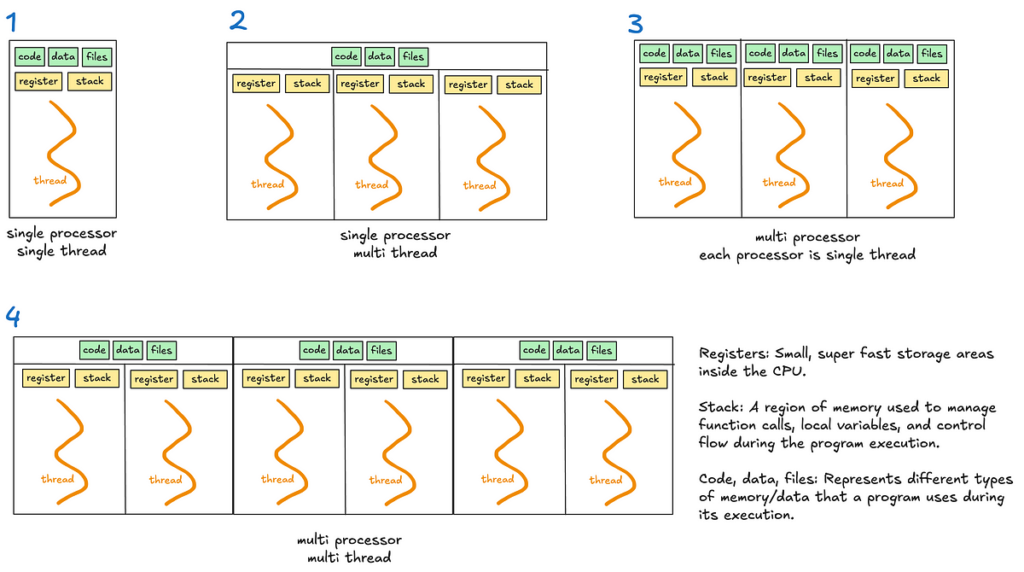

Multithreading allows a process to execute multiple threads concurrently, with threads sharing the same memory and resources (see diagrams 2 and 4).

However, Python’s Global Interpreter Lock (GIL) limits multithreading’s effectiveness for CPU-bound tasks.

Python’s Global Interpreter Lock (GIL)

The GIL is a lock that allows only one thread to hold control of the Python interpreter at any time, meaning only one thread can execute Python bytecode at once.

The GIL was introduced to simplify memory management in Python, as many internal operations, such as object creation, are not thread safe by default. Without a GIL, multiple threads trying to access shared resources would require complex locks or synchronisation mechanisms to prevent race conditions and data corruption.

When is the GIL a bottleneck?

- For single-threaded programs, the GIL is irrelevant as the thread has exclusive access to the Python interpreter.

- For multithreaded I/O-bound programs, the GIL is less problematic as threads release the GIL when waiting for I/O operations.

- For multithreaded CPU-bound operations, the GIL becomes a significant bottleneck. Multiple threads competing for the GIL must take turns executing Python bytecode.

An interesting case worth noting is the use of time.sleep, which Python effectively treats as an I/O operation. The time.sleep function is not CPU-bound because it does not involve active computation or the execution of Python bytecode during the sleep period. Instead, the responsibility of tracking the elapsed time is delegated to the OS. During this time, the thread releases the GIL, allowing other threads to run and utilise the interpreter.
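The difference between the last two cases can be observed with a small timing experiment. The sketch below is illustrative only: cpu_task, sleep_task and timed_threads are hypothetical helper names, the workload sizes are arbitrary, and the numbers depend on the machine and on running a standard CPython build that has the GIL.

```python
import threading
import time


def cpu_task(n=200_000):
    # Pure-Python loop: the thread needs the GIL for every bytecode it runs.
    total = 0
    for i in range(n):
        total += i * i
    return total


def sleep_task(seconds=0.2):
    # time.sleep releases the GIL, so other threads can run in the meantime.
    time.sleep(seconds)


def timed_threads(target, count=4):
    # Start `count` threads running `target` and return the wall-clock time.
    threads = [threading.Thread(target=target) for _ in range(count)]
    start = time.perf_counter()
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"4 sleeping threads:  {timed_threads(sleep_task):.2f}s")
    print(f"4 CPU-bound threads: {timed_threads(cpu_task):.2f}s")
```

The four sleeping threads should finish in roughly the time of a single sleep, because their waits overlap. The CPU-bound threads typically take about as long as running the four loops one after another, since they have to take turns holding the GIL.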

Multiprocessing enables a system to run multiple processes in parallel, each with its own memory, GIL and resources. Within each process, there may be one or more threads (see diagrams 3 and 4).

Multiprocessing bypasses the limitations of the GIL. This makes it suitable for CPU-bound tasks that require heavy computation.

However, multiprocessing is more resource intensive due to separate memory and process overheads.
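As a rough illustration of spreading CPU-bound work across processes, here is a minimal sketch using ProcessPoolExecutor from the standard library. The functions count_primes and count_primes_parallel are made-up names for this example, and the prime counting is deliberately naive so that it keeps a CPU core busy.

```python
from concurrent.futures import ProcessPoolExecutor


def count_primes(limit):
    # Deliberately naive, CPU-heavy work: count the primes below `limit`.
    count = 0
    for n in range(2, limit):
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


def count_primes_parallel(limits, workers=2):
    # Each call runs in a separate process with its own interpreter and GIL.
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(count_primes, limits))


if __name__ == "__main__":
    # The guard matters: child processes may re-import this module.
    print(count_primes_parallel([10_000, 20_000]))
```

Arguments and results are pickled and copied between processes, which is part of the overhead mentioned above, so this approach tends to pay off only when each unit of work is reasonably large.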

Unlike threads or processes, asyncio uses a single thread to handle multiple tasks.

When writing asynchronous code with the asyncio library, you use the async/await keywords to manage tasks.

Key concepts

- Coroutines: These are functions defined with async def. They’re the core of asyncio and represent tasks that can be paused and resumed later.

- Event loop: It manages the execution of tasks.

- Tasks: Wrappers around coroutines. When you want a coroutine to actually start running, you turn it into a task, e.g. using asyncio.create_task().

- await: Pauses execution of a coroutine, giving control back to the event loop.
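The four concepts above fit together in a few lines. This is only a sketch; greet is a hypothetical coroutine used for illustration.

```python
import asyncio


async def greet(name):
    # A coroutine function: calling it only creates a coroutine object.
    await asyncio.sleep(0.01)  # await hands control back to the event loop
    return f"hello {name}"


async def main():
    coro = greet("a")                       # nothing has run yet
    task = asyncio.create_task(greet("b"))  # wrapped in a Task and scheduled
    first = await coro                      # runs greet("a") to completion
    second = await task                     # collects the Task's result
    return [first, second]


if __name__ == "__main__":
    # asyncio.run creates the event loop, runs main() and closes the loop.
    print(asyncio.run(main()))
```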

How it works

Asyncio runs an event loop that schedules tasks. Tasks voluntarily “pause” themselves when waiting for something, like a network response or a file read. While the task is paused, the event loop switches to another task, ensuring no time is wasted waiting.

This makes asyncio ideal for scenarios involving many small tasks that spend a lot of time waiting, such as handling thousands of web requests or managing database queries. Since everything runs on a single thread, asyncio avoids the overhead and complexity of thread switching.
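The switching described above can be made visible by logging when each task starts and resumes. A minimal sketch, assuming a hypothetical worker coroutine and using asyncio.gather (a standard library helper not otherwise covered in this article) to run two coroutines on the same loop:

```python
import asyncio


async def worker(name, log):
    log.append(f"{name} start")
    await asyncio.sleep(0)  # voluntarily yield to the event loop
    log.append(f"{name} end")


async def main():
    log = []
    # gather schedules both coroutines on the same event loop and waits for both.
    await asyncio.gather(worker("A", log), worker("B", log))
    return log


if __name__ == "__main__":
    print(asyncio.run(main()))
    # ['A start', 'B start', 'A end', 'B end']
```

Worker B starts before worker A has finished because A paused at its await, which is exactly the point where the event loop is free to switch.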

The key difference between asyncio and multithreading lies in how they handle waiting tasks.

- Multithreading relies on the OS to switch between threads when one thread is waiting (preemptive context switching). When a thread is waiting, the OS switches to another thread automatically.

- Asyncio uses a single thread and depends on tasks to “cooperate” by pausing when they need to wait (cooperative multitasking).
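One consequence of cooperative multitasking is that a coroutine which never awaits holds up every other task on the loop. The sketch below contrasts a blocking wait with a cooperative one; blocking_wait, cooperative_wait and timed are hypothetical names, and the delays are arbitrary.

```python
import asyncio
import time


async def blocking_wait(delay):
    time.sleep(delay)  # never yields: the whole event loop is stalled


async def cooperative_wait(delay):
    await asyncio.sleep(delay)  # yields, so other tasks can run meanwhile


async def timed(coro_fn, count=3, delay=0.1):
    # Run `count` copies of the coroutine together and measure wall-clock time.
    start = time.perf_counter()
    await asyncio.gather(*(coro_fn(delay) for _ in range(count)))
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"blocking:    {asyncio.run(timed(blocking_wait)):.2f}s")
    print(f"cooperative: {asyncio.run(timed(cooperative_wait)):.2f}s")
```

The blocking version should take roughly three times the delay because the waits happen one after another, while the cooperative version should take roughly one delay because the waits overlap.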

2 ways to write async code:

Method 1: await coroutine

When you directly await a coroutine, the execution of the current coroutine pauses at the await statement until the awaited coroutine finishes. Tasks are executed sequentially within the current coroutine.

Use this approach when you need the result of the coroutine immediately to proceed with the next steps.

Although this might sound like synchronous code, it’s not. In synchronous code, the entire program would block during a pause.

With asyncio, only the current coroutine pauses, while the rest of the program can continue running. This makes asyncio non-blocking at the program level.

Example:

The event loop pauses the current coroutine until fetch_data is complete.

    import asyncio

    async def fetch_data():
        print("Fetching data...")
        await asyncio.sleep(1)  # Simulate a network call
        print("Data fetched")
        return "data"

    async def main():
        result = await fetch_data()  # Current coroutine pauses here
        print(f"Result: {result}")

    asyncio.run(main())
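To see the sequential behaviour of direct awaits, the sketch below awaits two coroutines one after the other and measures the total time. The fetch coroutine and its labels are hypothetical, and the delay is arbitrary.

```python
import asyncio
import time


async def fetch(label, delay=0.1):
    await asyncio.sleep(delay)  # stand-in for a network call
    return label


async def main():
    start = time.perf_counter()
    first = await fetch("users")    # main() pauses here until this finishes
    second = await fetch("orders")  # only starts after the first one is done
    elapsed = time.perf_counter() - start
    return first, second, elapsed


if __name__ == "__main__":
    first, second, elapsed = asyncio.run(main())
    print(first, second, f"{elapsed:.2f}s")
```

The elapsed time should be close to the sum of the two delays, since the second call does not begin until the first has returned.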

Method 2: asyncio.create_task(coroutine)

The coroutine is scheduled to run concurrently in the background. Unlike await, the current coroutine continues executing immediately without waiting for the scheduled task to finish.

The scheduled coroutine starts running as soon as the event loop finds an opportunity, without needing to wait for an explicit await.

No new threads are created; instead, the coroutine runs within the same thread as the event loop, which manages when each task gets execution time.

This approach enables concurrency within the program, allowing multiple tasks to overlap their execution efficiently. You’ll later need to await the task to get its result and ensure it’s done.

Use this approach when you want to run tasks concurrently and don’t need the results immediately.

Example:

When the line asyncio.create_task() is reached, the coroutine fetch_data() is scheduled to start running as soon as the event loop is available. This can happen even before you explicitly await the task. In contrast, in the first await method, the coroutine only starts executing when the await statement is reached.

Overall, this makes the program more efficient by overlapping the execution of multiple tasks.

    import asyncio

    async def fetch_data():
        # Simulate a network call
        await asyncio.sleep(1)
        return "data"

    async def main():
        # Schedule fetch_data
        task = asyncio.create_task(fetch_data())
        # Simulate doing other work
        await asyncio.sleep(5)
        # Now, await task to get the result
        result = await task
        print(result)

    asyncio.run(main())
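The claim that a task can start, and even finish, before it is explicitly awaited can be checked by recording events in a list. This is a sketch with shortened, arbitrary delays; the log parameter is an addition for illustration and is not part of the example above.

```python
import asyncio


async def fetch_data(log):
    log.append("fetch started")
    await asyncio.sleep(0.05)  # simulate a network call
    log.append("fetch finished")
    return "data"


async def main():
    log = []
    task = asyncio.create_task(fetch_data(log))
    log.append("task created")
    await asyncio.sleep(0.1)  # other work; the task runs in the meantime
    log.append("about to await task")
    result = await task  # already finished, so this returns straight away
    return result, log


if __name__ == "__main__":
    result, log = asyncio.run(main())
    print(result, log)
```

The log should read "task created", "fetch started", "fetch finished", "about to await task": the task begins the first time main() yields to the event loop, not when it is awaited.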

Other important points

- You can mix synchronous and asynchronous code.

Since synchronous code is blocking, it can be offloaded to a separate thread using asyncio.to_thread(). This makes your program effectively multithreaded.

In the example below, the asyncio event loop runs on the main thread, while a separate background thread is used to execute sync_task.

    import asyncio
    import time

    def sync_task():
        time.sleep(2)  # Blocking call, runs in a background thread
        return "Completed"

    async def main():
        result = await asyncio.to_thread(sync_task)
        print(result)

    asyncio.run(main())
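To confirm that the event loop stays responsive while the blocking function runs elsewhere, the following sketch runs a small ticker coroutine alongside the offloaded call and compares thread identifiers. The ticker coroutine is hypothetical, sync_task is changed here to return its thread id, and asyncio.to_thread requires Python 3.9 or later.

```python
import asyncio
import threading
import time


def sync_task():
    time.sleep(0.2)  # blocking call, executed in a worker thread
    return threading.get_ident()


async def ticker(ticks):
    # Keeps running on the event loop while sync_task blocks its own thread.
    for _ in range(3):
        await asyncio.sleep(0.05)
        ticks.append("tick")


async def main():
    ticks = []
    loop_thread = threading.get_ident()
    worker_thread, _ = await asyncio.gather(
        asyncio.to_thread(sync_task), ticker(ticks)
    )
    return loop_thread, worker_thread, ticks


if __name__ == "__main__":
    loop_thread, worker_thread, ticks = asyncio.run(main())
    print(loop_thread != worker_thread, ticks)
```

The two thread identifiers differ, and the ticker completes all of its ticks even though sync_task is blocked in time.sleep for the whole period.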

- You should offload CPU-bound tasks which are computationally intensive to a separate process.
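One way to do this from asyncio code, sketched here under the assumption that a standard library process pool is acceptable, is loop.run_in_executor with a ProcessPoolExecutor. The fib function is a hypothetical stand-in for real CPU-heavy work.

```python
import asyncio
from concurrent.futures import ProcessPoolExecutor


def fib(n):
    # Deliberately slow recursive Fibonacci as a stand-in for CPU-heavy work.
    return n if n < 2 else fib(n - 1) + fib(n - 2)


async def main():
    loop = asyncio.get_running_loop()
    with ProcessPoolExecutor(max_workers=2) as pool:
        # Each call runs in a worker process; the event loop stays free.
        futures = [loop.run_in_executor(pool, fib, n) for n in (20, 22)]
        return await asyncio.gather(*futures)


if __name__ == "__main__":
    # The guard matters: worker processes may re-import this module.
    print(asyncio.run(main()))
```

Because the work happens in other processes, the coroutine only waits for the results, and other tasks on the event loop can keep running in the meantime.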

This flow is a good way to decide when to use what.

- Multiprocessing

  – Best for CPU-bound tasks which are computationally intensive.

  – When you need to bypass the GIL: each process has its own Python interpreter, allowing for true parallelism.

- Multithreading

  – Best for fast I/O-bound tasks, as the frequency of context switching is reduced and the Python interpreter sticks to a single thread for longer.

  – Not ideal for CPU-bound tasks due to the GIL.

- Asyncio

  – Ideal for slow I/O-bound tasks such as long network requests or database queries because it efficiently handles waiting, making it scalable.

  – Not suitable for CPU-bound tasks without offloading work to other processes.

That’s it folks. There’s a lot more to this topic, but I hope I’ve introduced you to the various concepts and when to use each method.

Thanks for reading! I write regularly on Python, software development and the projects I build, so give me a follow to not miss out. See you in the next article 🙂