You should read this.

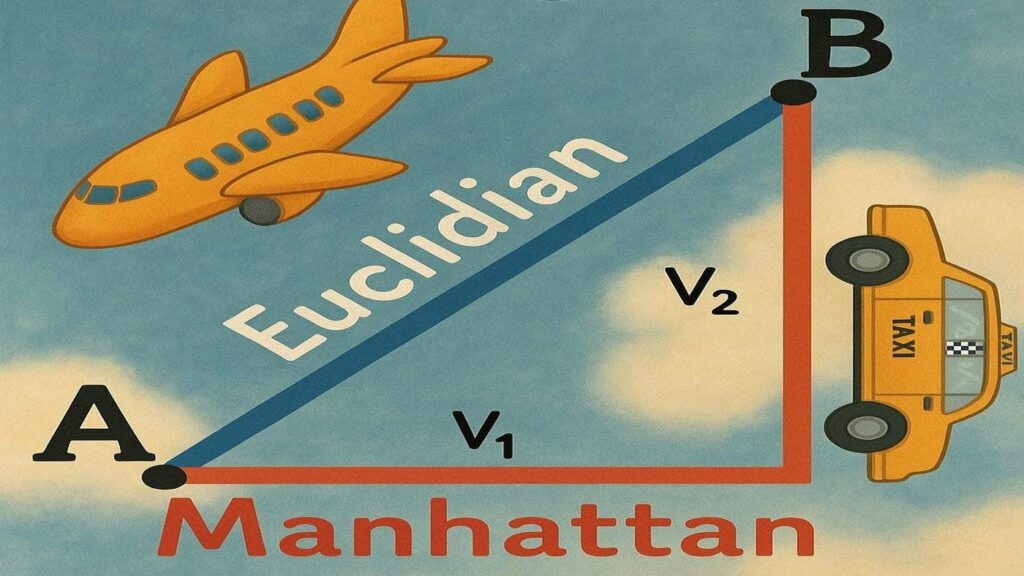

As someone who did a Bachelor's in Mathematics, I was first introduced to L¹ and L² as measures of distance… now they seem to be measures of error. Where did we go wrong? Jokes aside, there seems to be a misconception that L¹ and L² serve the same function. While that may sometimes be true, each norm shapes its models in drastically different ways.

In this article we'll travel from plain old points on a line all the way to L∞. Along the way we'll stop to see why L¹ and L² matter, how they differ, and where the L∞ norm shows up in AI.

Our Agenda:

- When to use L¹ versus L² loss

- How L¹ and L² regularization pull a model toward sparsity or smooth shrinkage

- Why the tiniest algebraic difference blurs GAN images, or leaves them razor-sharp

- How to generalize distance to Lᵖ space, and what the L∞ norm represents

A Brief Note on Mathematical Abstraction

You may have had a conversation (perhaps a confusing one) where the term mathematical abstraction popped up. You may also have left it feeling a little more confused about what mathematicians are actually doing. Abstraction means extracting the underlying patterns and properties of a concept so it can be generalized to a wider range of applications. This might sound complicated, but take a look at this trivial example:

A point in 1-D is x = x₁; in 2-D, x = (x₁, x₂); in 3-D, x = (x₁, x₂, x₃). I don't know about you, but I can't visualize 42 dimensions. Still, the same pattern tells me that a point in 42 dimensions would be x = (x₁, …, x₄₂).

This might seem trivial, but this kind of abstraction is key to reaching L∞, where we abstract distance instead of a point. From now on, let's work with x = (x₁, x₂, x₃, …, xₙ), otherwise known by its formal name: x ∈ ℝⁿ. Any vector between two points is then v = x − y = (x₁ − y₁, x₂ − y₂, …, xₙ − yₙ).
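In code, this abstraction costs nothing, because a NumPy array can hold a point in any number of dimensions. Here is a minimal sketch, assuming NumPy and two randomly generated points in ℝ⁴². The seed and the random points are purely illustrative.

```python
import numpy as np

n = 42
rng = np.random.default_rng(seed=0)  # fixed seed, purely for reproducibility
x = rng.normal(size=n)               # a point x in R^42
y = rng.normal(size=n)               # another point y in R^42
v = x - y                            # v = (x1 - y1, x2 - y2, ..., x42 - y42)
print(v.shape)                       # one coordinate per dimension
```

The subtraction is applied coordinate by coordinate, which is exactly the pattern written out above for n = 42.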

The “Regular” Norms: L1 and L2

The main takeaway is simple but powerful. The L¹ and L² norms behave differently in a few crucial ways, so you can combine them in a single objective to juggle two competing goals. In regularization, L¹ and L² terms inside the loss function help strike the sweet spot on the bias-variance spectrum, yielding a model that is both accurate and generalizable. In GANs, an L¹ pixel loss is paired with an adversarial loss so the generator makes images that (i) look realistic and (ii) match the intended output. Tiny distinctions between the two norms explain why Lasso performs feature selection and why swapping L¹ for L² in a GAN often produces blurry images.

L¹ vs. L² Loss: Similarities and Differences

- If your data may contain many outliers or heavy-tailed noise, you usually reach for L¹.

- If you care most about overall squared error and your data is reasonably clean, L² is fine. It is also easier to optimize because it's smooth.
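Here is a small numerical sketch of the outlier point, assuming NumPy and made-up toy data. The arrays and the corrupted label are illustrative, not taken from a real dataset. Relative to the clean baseline, a single bad label inflates MSE far more than it inflates MAE.

```python
import numpy as np

def mae(y_true, y_pred):
    """L1 loss: mean absolute error."""
    return float(np.mean(np.abs(y_true - y_pred)))

def mse(y_true, y_pred):
    """L2 loss: mean squared error."""
    return float(np.mean((y_true - y_pred) ** 2))

y_true = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
y_pred = np.array([1.1, 1.9, 3.2, 3.8, 5.1])  # small errors everywhere

y_noisy = y_true.copy()
y_noisy[-1] = 50.0  # same targets, but one corrupted label (an outlier)

print("MAE clean -> noisy:", mae(y_true, y_pred), "->", mae(y_noisy, y_pred))
print("MSE clean -> noisy:", mse(y_true, y_pred), "->", mse(y_noisy, y_pred))
```

Because the outlier's residual is squared, it ends up dominating the L² objective. Under L¹ it only adds its absolute size.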

MAE (mean absolute error, the L¹ loss) penalizes each error in proportion to its size, so models trained with L¹ settle near the median observation. That is exactly why L¹ loss retains texture detail in GANs. MSE (mean squared error, the L² loss) has a quadratic penalty that nudges the model toward a mean value, which looks smeared.
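The median-versus-mean claim can be checked directly. Consider a hypothetical pixel that is dark (0) in three of five plausible images and bright (1) in the other two. The sketch below searches over constant predictions, assuming NumPy and a simple grid search.

```python
import numpy as np

obs = np.array([0.0, 0.0, 0.0, 1.0, 1.0])  # hypothetical pixel values across 5 plausible images
candidates = np.linspace(0.0, 1.0, 1001)   # constant predictions to try

l1_cost = [np.abs(obs - c).sum() for c in candidates]    # total absolute error
l2_cost = [((obs - c) ** 2).sum() for c in candidates]   # total squared error

best_l1 = candidates[int(np.argmin(l1_cost))]
best_l2 = candidates[int(np.argmin(l2_cost))]
print("L1 minimizer:", best_l1, "median:", np.median(obs))
print("L2 minimizer:", best_l2, "mean:", obs.mean())
```

The L¹ minimizer lands on the median, a crisp 0. The L² minimizer lands on the mean, a washed-out 0.4 that matches none of the plausible images.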

L¹ Regularization (Lasso)

Optimization and regularization pull in opposite directions. Optimization tries to fit the training set perfectly, whereas regularization deliberately sacrifices a little training accuracy to gain generalization. Adding an L¹ penalty α∥w∥₁ promotes sparsity: many coefficients collapse all the way to zero. A bigger α means harsher feature pruning, simpler models, and less noise from irrelevant inputs. With Lasso you get built-in feature selection, because the ∥w∥₁ term actually switches small weights off, whereas L² merely shrinks them.
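To see why the L¹ term switches small weights off while L² only shrinks them, look at the one-dimensional problem of minimizing ½(w − z)² plus a penalty, where z is the unpenalized weight. For α|w| the solution is soft-thresholding, sign(z)·max(|z| − α, 0). For αw² it is z / (1 + 2α). This is a simplified scalar sketch, not scikit-learn's code, although coordinate-descent Lasso solvers apply this kind of update one coefficient at a time. The weights `z` are hypothetical.

```python
import numpy as np

def l1_shrink(z, alpha):
    """Minimizer of 0.5*(w - z)**2 + alpha*|w|: soft-thresholding."""
    return np.sign(z) * np.maximum(np.abs(z) - alpha, 0.0)

def l2_shrink(z, alpha):
    """Minimizer of 0.5*(w - z)**2 + alpha*w**2: proportional shrinkage."""
    return z / (1.0 + 2.0 * alpha)

z = np.array([-3.0, -0.5, 0.2, 1.0, 4.0])  # hypothetical unpenalized weights
print("L1:", l1_shrink(z, alpha=1.0))  # weights with |z| <= alpha become exactly 0
print("L2:", l2_shrink(z, alpha=1.0))  # every weight shrinks, none hits 0
```

Any weight whose magnitude falls below α is set to exactly zero by the L¹ operator. The L² operator scales every weight by the same factor.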

L² Regularization (Ridge)

Change the regularization term to

$$\alpha\|w\|_2^2 = \alpha\sum_{j} w_j^2$$

and you have Ridge regression. Ridge shrinks weights toward zero but usually never makes them exactly zero. This discourages any single feature from dominating while still keeping every feature in play. It's helpful when you believe all inputs matter but want to curb overfitting.
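Because the squared penalty is smooth, Ridge even has a closed-form solution. With no intercept and scikit-learn's Ridge objective ∥y − Xw∥₂² + α∥w∥₂², the weights are w = (XᵀX + αI)⁻¹Xᵀy. The sketch below checks that formula against `Ridge(fit_intercept=False)` and counts exact zeros. It uses synthetic data from `make_regression`, and α = 10 is chosen arbitrarily.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge

X, y = make_regression(n_samples=100, n_features=30, n_informative=5,
                       noise=10, random_state=0)
alpha = 10.0

# Closed form: solve (X^T X + alpha * I) w = X^T y, with no intercept term
w_closed = np.linalg.solve(X.T @ X + alpha * np.eye(X.shape[1]), X.T @ y)
w_sklearn = Ridge(alpha=alpha, fit_intercept=False).fit(X, y).coef_

print("matches sklearn:", np.allclose(w_closed, w_sklearn))
print("exact zeros:", int((w_closed == 0).sum()))
```

Every coefficient is shrunk by the added αI, but none is driven exactly to zero.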

Both Lasso and Ridge improve generalization, but they do so differently. With Lasso, once a weight hits zero, the optimizer feels no strong pull to leave. It's like standing still on flat ground, so zeros naturally “stick.” In more technical terms, the two penalties mold the coefficient space differently. Lasso's diamond-shaped constraint set has corners on the axes, where some coordinates are exactly zero, while Ridge's spherical set merely squeezes them. Don't worry if that didn't click. Much of the theory is beyond the scope of this article, but if it interests you, this reading on Lᵖ space should help.

But back to the point. Notice that when we train both models on the same data, Lasso removes some input features by setting their coefficients exactly to zero.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso, Ridge

# 100 samples, 30 features, only 5 of which actually drive y
# (random_state fixed so runs are reproducible)
X, y = make_regression(n_samples=100, n_features=30, n_informative=5,
                       noise=10, random_state=0)

model = Lasso(alpha=0.1).fit(X, y)
print("Lasso nonzero coeffs:", (model.coef_ != 0).sum())

model = Ridge(alpha=0.1).fit(X, y)
print("Ridge nonzero coeffs:", (model.coef_ != 0).sum())
```

Notice that if we increase α to 10, even more features are removed. This can be dangerous, because we could be eliminating informative features.

```python
# Reuses X, y from the previous block
model = Lasso(alpha=10).fit(X, y)
print("Lasso nonzero coeffs:", (model.coef_ != 0).sum())

model = Ridge(alpha=10).fit(X, y)
print("Ridge nonzero coeffs:", (model.coef_ != 0).sum())
```
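To see the pruning trend across more than two settings, a short sketch can sweep α. The grid of α values, the fixed `random_state=0`, and `max_iter=10_000` (which gives Lasso room to converge at small α) are assumptions chosen for illustration.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso, Ridge

X, y = make_regression(n_samples=100, n_features=30, n_informative=5,
                       noise=10, random_state=0)

counts = {}
for alpha in [0.01, 0.1, 1, 10, 100]:
    lasso = Lasso(alpha=alpha, max_iter=10_000).fit(X, y)  # extra iterations for small alpha
    ridge = Ridge(alpha=alpha).fit(X, y)
    counts[alpha] = (int((lasso.coef_ != 0).sum()), int((ridge.coef_ != 0).sum()))
    print(f"alpha={alpha:>6}: Lasso nonzero={counts[alpha][0]:2d}, "
          f"Ridge nonzero={counts[alpha][1]:2d}")
```

Ridge keeps all 30 coefficients nonzero at every α. Lasso's count falls as α grows, eventually cutting into the five informative features as well.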

L¹ Loss in Generative Adversarial Networks (GANs)

GANs pit two networks against each other: a Generator G (the “forger”) and a Discriminator D (the “detective”). To make G produce images that are both convincing and faithful, many image-to-image GANs use a hybrid loss

$$G^{*} = \arg\min_{G}\max_{D}\; \mathcal{L}_{\text{GAN}}(G, D) + \lambda\, \mathbb{E}_{x,y}\big[\|y - G(x)\|_1\big]$$

where

- x: input image (e.g., a sketch)

- y: real target image (e.g., a photo)

- λ: balance knob between realism and fidelity

Switch the pixel loss to L² and you square pixel errors. Large residuals then dominate the objective, so G plays it safe by predicting the mean of all plausible textures. The result is smoother, blurrier outputs. With L¹, every unit of pixel error costs the same, so G gravitates to the median texture patch and keeps boundaries sharp.
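The sketch below shows, without any deep-learning framework, how such a hybrid objective could be computed, with NumPy arrays standing in for images and discriminator outputs. Several details are illustrative assumptions rather than any specific paper's or library's implementation: the function name `generator_loss`, the non-saturating form of the adversarial term, and the default `lam=100.0`. The `pixel` switch lets you swap L¹ for L².

```python
import numpy as np

def generator_loss(d_fake, fake, target, lam=100.0, pixel="l1"):
    """Hypothetical hybrid generator objective: adversarial term + lam * pixel term.

    d_fake : discriminator's probabilities that the generated images are real, in (0, 1)
    fake   : generator output G(x)
    target : ground-truth image y
    """
    eps = 1e-12  # guards against log(0)
    # Non-saturating adversarial term: small when D outputs values near 1 on fakes
    adversarial = -np.mean(np.log(d_fake + eps))
    residual = target - fake
    if pixel == "l1":
        reconstruction = np.mean(np.abs(residual))  # L1: every unit of error costs the same
    elif pixel == "l2":
        reconstruction = np.mean(residual ** 2)     # L2: large residuals dominate
    else:
        raise ValueError("pixel must be 'l1' or 'l2'")
    return float(adversarial + lam * reconstruction)

rng = np.random.default_rng(0)
y = rng.random((8, 8))                          # stand-in "real" image
g_x = y + rng.normal(scale=0.1, size=y.shape)   # stand-in generator output
d_out = np.full(4, 0.3)                         # stand-in discriminator scores
print("L1 hybrid:", generator_loss(d_out, g_x, y, pixel="l1"))
print("L2 hybrid:", generator_loss(d_out, g_x, y, pixel="l2"))
```

Raising `lam` weights fidelity to y more heavily relative to fooling D. Lowering it does the opposite.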

Why tiny variations matter

- In regression, the kink in the L¹ penalty at zero (where it has no derivative) lets Lasso zero out weak predictors, whereas Ridge only nudges them.

- In vision, the linear penalty of L¹ retains high-frequency detail that L² blurs away.

- In both cases you can combine L¹ and L² to trade off robustness, sparsity, and smooth optimization. That is exactly the balancing act at the heart of modern machine-learning objectives.
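In regression, one standard way to combine the two penalties is Elastic Net, available in scikit-learn as `ElasticNet`. Its `l1_ratio` parameter sets the mix: 1.0 is pure L¹, and smaller values add more L² shrinkage. The α = 1.0, the ratio grid, and the synthetic data are arbitrary choices for this sketch.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import ElasticNet, Lasso

X, y = make_regression(n_samples=100, n_features=30, n_informative=5,
                       noise=10, random_state=0)

# l1_ratio=1.0 is pure L1 (Lasso); smaller values mix in more L2 (Ridge-like) shrinkage
for ratio in [1.0, 0.7, 0.3, 0.1]:
    enet = ElasticNet(alpha=1.0, l1_ratio=ratio, max_iter=10_000).fit(X, y)
    print(f"l1_ratio={ratio}: nonzero coeffs = {(enet.coef_ != 0).sum()}")

# Sanity check: with l1_ratio=1.0, ElasticNet reduces to Lasso with the same alpha
enet_l1 = ElasticNet(alpha=1.0, l1_ratio=1.0, max_iter=10_000).fit(X, y)
lasso = Lasso(alpha=1.0, max_iter=10_000).fit(X, y)
print("ElasticNet(l1_ratio=1) == Lasso:", np.allclose(enet_l1.coef_, lasso.coef_))
```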

Generalizing Distance to Lᵖ

Before we reach L∞, we need to talk about the four rules every norm must satisfy:

- Non-negativity: A distance can't be negative; nobody says “I'm −10 m from the pool.”

- Positive definiteness: The distance is zero only for the zero vector, where no displacement has occurred.

- Absolute homogeneity (scalability): Scaling a vector by α scales its length by |α|. If you double your velocity, you double the distance covered in the same time.

- Triangle inequality: A detour through y is never shorter than going straight from start to finish: ∥x + y∥ ≤ ∥x∥ + ∥y∥.
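You can probe these four rules numerically. The sketch below spot-checks them on random vectors using NumPy's `np.linalg.norm` with `ord=1`, `2`, and `np.inf`. The helper `satisfies_norm_rules`, the dimension, the trial count, and the tolerance are hypothetical choices. A passing check is evidence, not a proof.

```python
import numpy as np

def satisfies_norm_rules(norm, dim=5, trials=1_000, seed=0, tol=1e-9):
    """Numerically spot-check the four norm rules on random vectors (evidence, not proof)."""
    rng = np.random.default_rng(seed)
    if norm(np.zeros(dim)) != 0:                   # zero vector must have length 0
        return False
    for _ in range(trials):
        x, y = rng.normal(size=dim), rng.normal(size=dim)
        a = rng.normal()
        nx = norm(x)
        if nx <= 0:                                # non-negativity / definiteness (x != 0 here)
            return False
        if abs(norm(a * x) - abs(a) * nx) > tol * (1 + abs(a) * nx):  # absolute homogeneity
            return False
        if norm(x + y) > nx + norm(y) + tol:                          # triangle inequality
            return False
    return True

for name, order in [("L1", 1), ("L2", 2), ("Linf", np.inf)]:
    print(name, satisfies_norm_rules(lambda v, o=order: np.linalg.norm(v, ord=o)))

# A plain sum is not a norm: it can be negative and ignores |a|
print("sum", satisfies_norm_rules(lambda v: float(np.sum(v))))
```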

At the beginning of this article, the mathematical abstraction we performed was quite simple. Now, as we look at the following norms, you can see we're doing something similar at a deeper level:

$$\|x\|_1 = \sum_{i=1}^{n}|x_i|, \qquad \|x\|_2 = \Big(\sum_{i=1}^{n}|x_i|^2\Big)^{1/2}, \qquad \|x\|_3 = \Big(\sum_{i=1}^{n}|x_i|^3\Big)^{1/3}, \qquad \dots$$

There's a clear pattern. The exponent inside the sum increases by one each time, and so does the root taken outside the sum. We also need to check whether this more abstract notion of distance still satisfies the four core properties above. For every p ≥ 1, it does. So we have successfully abstracted the concept of distance into a single family of distances, the Lᵖ space:

$$\|x\|_p = \Big(\sum_{i=1}^{n}|x_i|^p\Big)^{1/p}, \qquad p \ge 1.$$

Taking the limit as p → ∞ squeezes that family all the way to the L∞ norm.

The L∞ Norm

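The L∞ norm goes by many names (supremum norm, max norm, uniform norm, Chebyshev norm), but they are all characterized by the following limit:

$$\|x\|_\infty = \lim_{p\to\infty}\Big(\sum_{i=1}^{n}|x_i|^p\Big)^{1/p} = \max_{1 \le i \le n}|x_i|$$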

Because we generalized our norm to Lᵖ space, two lines of code are enough for a function that calculates distance in any Lᵖ norm. Quite useful.

```python
def Lp_norm(v, p):
    # Direct translation of the formula; very large p can overflow floats
    return sum(abs(x)**p for x in v) ** (1/p)
```

We can now evaluate how our measure of distance changes as p increases. The graphs below show that it decreases monotonically and approaches a very specific value: the largest absolute value in the vector, represented by the dashed black line.
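The direct formula overflows once |xᵢ|ᵖ exceeds the float range. A common workaround is to factor out m = max|xᵢ| first, so every term inside the sum lies in [0, 1]. The sketch below takes that approach. The name `lp_norm_stable` and the sample vector are hypothetical, and `p = math.inf` is treated as the max norm.

```python
import math

def lp_norm_stable(v, p):
    """L^p norm computed as m * (sum (|x|/m)^p)^(1/p), with m = max |x|, to avoid overflow.

    p = math.inf is handled directly as the max norm.
    """
    m = max(abs(x) for x in v)
    if m == 0:
        return 0.0
    if math.isinf(p):
        return float(m)
    return m * sum((abs(x) / m) ** p for x in v) ** (1 / p)

v = [3.0, -4.0, 1.0]
ps = [1, 2, 3, math.pi, 10, 100, 1000]
values = [lp_norm_stable(v, p) for p in ps]
for p, val in zip(ps, values):
    print(f"p={p:>9.4f}  norm={val:.6f}")
print("max |v_i| =", max(abs(x) for x in v))
```

The printed values shrink from 8 (p = 1) toward 4, the largest absolute coordinate, as p grows.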

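In fact, it doesn't merely approach the largest absolute coordinate of our vector; in the limit it equals it. With m = maxᵢ|xᵢ|, the sum contains at least one term equal to mᵖ and at most n such terms, so the norm is squeezed between two bounds that meet as p → ∞:

$$\max_i |x_i| \;\le\; \|x\|_p \;\le\; n^{1/p}\max_i |x_i| \quad\Longrightarrow\quad \lim_{p\to\infty}\|x\|_p = \max_i |x_i|$$

The max norm shows up whenever you need a uniform guarantee or worst-case control. In less technical terms, L∞ is your go-to norm whenever no individual coordinate may exceed a certain threshold, or whenever you want a hard cap on every coordinate of your vector.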

This isn't just a quirk of theory. It's genuinely useful and appears in a wide range of contexts:

- Maximum absolute error: bound every prediction so none drifts too far.

- Max-abs feature scaling: squash each feature into [−1, 1] by dividing by its largest absolute value, without destroying sparsity (zeros stay zero).

- Max-norm weight constraints: keep all parameters inside an axis-aligned box.

- Adversarial robustness: restrict every pixel perturbation to an ε-cube (an L∞ ball).

- Chebyshev distance in k-NN and grid searches: count “king's-move” steps, where a diagonal step costs the same as a straight one.

- Robust regression / Chebyshev-center portfolio problems: linear programs that minimize the worst residual.

- Fairness caps: limit the largest per-group violation, not just the average.

- Bounding-box collision tests: wrap objects in axis-aligned boxes for quick overlap checks.
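A few of these uses fit in a couple of NumPy lines each. The sketch below covers the maximum absolute error, clipping an adversarial example back into an ε-cube, and king's-move distance. The helper names and sample inputs are hypothetical. Real adversarial pipelines typically also clip to the valid pixel range, which is omitted here.

```python
import numpy as np

def max_abs_error(y_true, y_pred):
    """L-infinity norm of the residual vector: the single worst prediction error."""
    residual = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.max(np.abs(residual)))

def project_to_linf_ball(x_adv, x, eps):
    """Clip a perturbed input back into the eps-cube (L-infinity ball) centred on x."""
    return np.clip(x_adv, x - eps, x + eps)

def king_moves(a, b):
    """Chebyshev distance: king moves between two squares on an empty chessboard."""
    return int(np.max(np.abs(np.subtract(a, b))))

print(max_abs_error([1, 2, 3], [1.0, 2.5, 5.0]))
print(project_to_linf_ball(np.array([0.5, -0.05, 0.02]), np.zeros(3), eps=0.1))
print(king_moves((0, 0), (3, 5)))
```

Each helper reduces to a `max` (or, equivalently, a clip) over absolute coordinates, which is the L∞ norm at work.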

With a more abstract notion of distance, all sorts of interesting questions come to the fore. We can consider values of p that aren't integers, say p = π (as in the graphs above). We can also consider p ∈ (0, 1), say p = 0.3. Would that still satisfy the four rules every norm must obey?
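For 0 < p < 1 the formula still returns a number, so a quick numerical probe is possible. This plain-Python sketch tests the triangle inequality for p = π and p = 0.3 on the unit vectors x = (1, 0) and y = (0, 1). The helper name `lp_value` and the two vectors are chosen only for illustration.

```python
import math

def lp_value(v, p):
    """(sum |v_i|^p)^(1/p): a norm for p >= 1, only a candidate for 0 < p < 1."""
    return sum(abs(t) ** p for t in v) ** (1 / p)

x, y = (1.0, 0.0), (0.0, 1.0)
x_plus_y = tuple(a + b for a, b in zip(x, y))

for p in [math.pi, 0.3]:
    straight = lp_value(x_plus_y, p)          # ||x + y||_p
    detour = lp_value(x, p) + lp_value(y, p)  # ||x||_p + ||y||_p
    print(f"p={p:.4f}: ||x+y||={straight:.4f}, ||x||+||y||={detour:.4f}, "
          f"triangle holds: {straight <= detour}")
```

For p = π the straight path (2^(1/π) ≈ 1.25) is shorter than the detour (2). For p = 0.3 it comes out near 2^(10/3) ≈ 10.08, so the triangle inequality fails. This is why the p < 1 version is usually called a quasi-norm rather than a norm.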

Conclusion

Abstracting the idea of distance can feel unwieldy, even needlessly theoretical. Distilling it to its core properties, though, frees us to ask questions that would otherwise be impossible to frame, and doing so reveals new norms with concrete, real-world uses. It's tempting to treat all distance measures as interchangeable, yet small algebraic differences give each norm distinct properties that shape the models built on them. From the bias-variance trade-off in regression to the choice between crisp and blurry images in GANs, how you measure distance matters.
