In the field of machine learning, the main objective is to find the best-fitting model for a particular task or set of tasks. To do this, one needs to optimize a loss/cost function, which in turn minimizes error. It helps to understand convex and concave functions, since they determine how effectively a problem can be optimized. These functions form the foundation of many machine learning algorithms and influence both loss minimization and training stability. In this article, you will learn what convex and concave functions are, how they differ, and how they affect optimization strategies in machine learning.

What is a Convex Function?

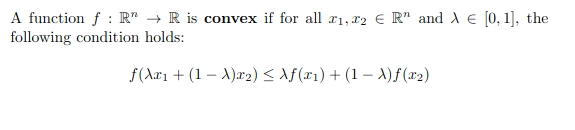

In mathematical terms, a real-valued function is convex if the line segment between any two points on its graph lies on or above the graph between those points. In simple terms, the graph of a convex function is shaped like a "cup" or "U".

A function is said to be convex if and only if the region above its graph is a convex set.

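Equivalently, for any two points x and y in the domain and any λ in [0, 1], a convex function f satisfies f(λx + (1 − λ)y) ≤ λf(x) + (1 − λ)f(y). This inequality ensures that the function does not bend downwards, which gives a convex function its characteristic bowl-shaped curve.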

What is a Concave Function?

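A function f is concave if −f is convex. Graphically, a concave function curves downwards like a cap: if we join any two points on its graph with a line segment, the segment lies on or below the graph. A function that is not convex is not automatically concave. Functions with several peaks and valleys, such as sin(x) over [0, 2π], are neither, and are called non-convex. These non-convex functions are the hard case in machine learning, and this article uses "concave" loosely to include them.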

In terms of sets: a set is convex if, for any two points in it, the whole segment joining them also lies in the set. A function is convex when the region above its graph is such a set, and concave when the region below its graph is.

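For a concave function the convexity inequality is reversed: f(λx + (1 − λ)y) ≥ λf(x) + (1 − λ)f(y) for any x and y in the domain and any λ in [0, 1]. The characteristic curve of a concave function is therefore an inverted bowl.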
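The chord definitions above can be tested numerically. The following is a minimal sketch, not a proof: the helper name `chord_check`, the sample count, and the tolerance are all assumptions made for illustration, and a sampled check can only refute convexity or concavity on the points it tries.

```python
import math


def chord_check(f, lo, hi, n=50):
    """Classify f on [lo, hi] by sampling the chord inequality.

    Returns "convex", "concave", "both" (affine on the samples) or "neither".
    """
    xs = [lo + (hi - lo) * i / (n - 1) for i in range(n)]
    lambdas = [0.25, 0.5, 0.75]
    tol = 1e-9  # slack for floating-point rounding
    convex = True
    concave = True
    for x in xs:
        for y in xs:
            for lam in lambdas:
                on_graph = f(lam * x + (1 - lam) * y)
                on_chord = lam * f(x) + (1 - lam) * f(y)
                if on_graph > on_chord + tol:
                    convex = False   # graph rises above the chord
                if on_graph < on_chord - tol:
                    concave = False  # graph dips below the chord
    if convex and concave:
        return "both"
    if convex:
        return "convex"
    if concave:
        return "concave"
    return "neither"


print(chord_check(lambda x: x * x, -3, 3))       # bowl shape
print(chord_check(lambda x: -x * x, -3, 3))      # inverted bowl
print(chord_check(math.sin, 0, 2 * math.pi))     # peaks and valleys
```

Under these assumptions, x² is reported as convex, −x² as concave, and sin(x) over [0, 2π] as neither, which is why the last one is better described as non-convex.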

Difference between Convex and Concave Functions

Below are the differences between convex and concave functions:

| Aspect | Convex Functions | Concave / Non-Convex Functions |
|---|---|---|
| Minima/Maxima | Any local minimum is also the global minimum | Can have multiple local minima and local maxima |
| Optimization | Easy to optimize with many standard techniques | Harder to optimize; standard techniques may fail to find the global minimum |
| Common Problems / Surfaces | Smooth, simple surfaces (bowl-shaped) | Complex surfaces with peaks and valleys |
| Examples | f(x) = x², f(x) = e^x, f(x) = max(0, x) | f(x) = sin(x) over [0, 2π] |

Optimization in Machine Learning

In machine learning, optimization is the process of iteratively improving the accuracy of a machine learning algorithm, which ultimately lowers its error. Machine learning aims to find the relationship between input and output in supervised learning, and to cluster similar points together in unsupervised learning. Therefore, a major goal of training a machine learning algorithm is to minimize the error between the predicted and true output.

Before proceeding further, we need to understand what loss/cost functions are and how they help in optimizing a machine learning algorithm.

Loss/Cost Functions

A loss function measures the difference between the actual value and the value predicted by the machine learning algorithm for a single record, whereas the cost function aggregates that difference over the entire dataset.

Loss and cost functions play an important role in guiding the optimization of a machine learning algorithm. They show quantitatively how well the model is performing, which gives optimization techniques like gradient descent a measure of how much the model parameters need to be adjusted. By minimizing these values, the model gradually increases its accuracy by reducing the difference between predicted and actual values.
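To make the distinction between a per-record loss and a dataset-level cost concrete, here is a small sketch. Squared error and its mean are chosen only as a familiar example; the function names and the sample values are assumptions for illustration.

```python
def squared_error(y_true, y_pred):
    """Loss for a single record: squared difference."""
    return (y_true - y_pred) ** 2


def mean_squared_error(ys_true, ys_pred):
    """Cost for a dataset: the average of the per-record losses."""
    losses = [squared_error(t, p) for t, p in zip(ys_true, ys_pred)]
    return sum(losses) / len(losses)


# Hypothetical actual and predicted values for three records
actual = [3.0, 5.0, 2.0]
predicted = [2.0, 5.0, 4.0]

per_record = [squared_error(t, p) for t, p in zip(actual, predicted)]
cost = mean_squared_error(actual, predicted)

print(per_record)  # one loss value per record
print(cost)        # a single number for the whole dataset
```

An optimizer such as gradient descent works on the single aggregated number, adjusting the model parameters in the direction that lowers it.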

Convex Optimization Advantages

Convex functions are particularly useful because any local minimum is also a global minimum. This means that when we optimize a convex cost function, a method that converges to a minimum has found the best possible solution. This makes optimization much easier and more reliable. Here are some key benefits:

- Guaranteed Global Minimum: In a convex function, every local minimum is also a global minimum, and a strictly convex function has at most one minimizer. This eases the search for the optimal solution, since there is no need to worry about getting stuck in a local minimum.

- Strong Duality: Convex optimization problems typically satisfy strong duality (for example, under Slater's condition), meaning the optimal value of the primal problem equals that of its dual problem, so the dual can be used to solve or bound the primal.

- Robustness: Solutions of convex problems are more robust to changes in the dataset. Typically, small changes in the input data do not lead to large changes in the optimal solution.

- Numerical stability: Algorithms for convex problems are often more numerically stable than those for non-convex problems, leading to more reliable results in practice.
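The first benefit can be illustrated with a short experiment: on a convex function, gradient descent reaches the same minimum regardless of where it starts. This is a minimal sketch under assumed settings; the function f(x) = (x − 3)², the learning rate, and the step count are illustrative choices, not values from the article.

```python
def gradient_descent(grad, x0, lr=0.1, steps=200):
    """Plain gradient descent on a one-dimensional function."""
    x = x0
    for _ in range(steps):
        x -= lr * grad(x)
    return x


def grad_convex(x):
    """Gradient of the convex function f(x) = (x - 3)**2."""
    return 2 * (x - 3)


# Very different starting points
starts = [-10.0, 0.0, 25.0]
results = [gradient_descent(grad_convex, s) for s in starts]

for s, r in zip(starts, results):
    print(f"start={s:6.1f} -> x={r:.6f}")
```

Every run ends at x ≈ 3, the single global minimum, so the result does not depend on initialization.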

Challenges With Concave Optimization

The major challenge in concave optimization is the presence of multiple minima and saddle points, which make it difficult to find the global minimum. Here are some key challenges:

- Higher computational cost: Because of the irregular shape of the loss surface, concave problems often require more iterations to increase the chances of finding better solutions. This increases both training time and compute demand.

- Local Minima: Concave functions can have multiple local minima, so optimization algorithms can easily get trapped at these suboptimal points.

- Saddle Points: Saddle points are flat regions where the gradient is 0 but which are neither local minima nor local maxima. Optimization algorithms like gradient descent may get stuck there and take a long time to escape.

- No guarantee of finding the global minimum: Unlike with convex functions, optimizing a concave function does not guarantee that the global (optimal) solution is found. This makes evaluation and verification harder.

- Sensitive to initialization/starting point: The starting point strongly influences the final outcome of the optimization, so poor initialization may lead to convergence to a local minimum or a saddle point.
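Two of the challenges above, sensitivity to the starting point and saddle points, can be reproduced in a few lines. This is a sketch under assumed settings: the functions f(x) = x⁴ − 3x² + x and g(x, y) = x² − y², the learning rates, and the step counts are illustrative choices, not examples from the article.

```python
def f(x):
    """A non-convex function with two valleys of different depth."""
    return x**4 - 3 * x**2 + x


def grad_f(x):
    return 4 * x**3 - 6 * x + 1


def gradient_descent(grad, x0, lr=0.01, steps=2000):
    x = x0
    for _ in range(steps):
        x -= lr * grad(x)
    return x


# Same algorithm, different starting points, different answers
left = gradient_descent(grad_f, -2.0)
right = gradient_descent(grad_f, 2.0)
print(f"start=-2 -> x={left:.4f}, f={f(left):.4f}")
print(f"start=+2 -> x={right:.4f}, f={f(right):.4f}")

# Saddle point: g(x, y) = x**2 - y**2 has zero gradient at (0, 0),
# which is a minimum along x but a maximum along y.
x, y = 1.0, 0.0  # start exactly on the x-axis
for _ in range(200):
    gx, gy = 2 * x, -2 * y
    x -= 0.1 * gx
    y -= 0.1 * gy
print(f"saddle run ends at ({x:.6f}, {y:.6f})")
```

The run started at +2 settles in the shallower valley and never sees the deeper one on the left, while the saddle run slides to (0, 0) and stops even though g decreases without bound along the y direction.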

Strategies for Optimizing Concave Functions

Optimizing a concave function can be very challenging because of its multiple local minima, saddle points, and other issues. However, there are several strategies that can improve the chances of finding good solutions. Some of them are explained below.

- Smart Initialization: Initialization schemes such as Xavier or He initialization give the optimizer a better starting point and reduce the chances of getting stuck at local minima and saddle points.

- Use of SGD and Its Variants: SGD (Stochastic Gradient Descent) introduces randomness through mini-batch sampling, which can help the algorithm escape shallow local minima. Adaptive methods such as Adam and RMSProp adjust the learning rate per parameter, and momentum smooths the updates, both of which help stabilize convergence.

- Learning Rate Scheduling: The learning rate is the size of the step taken toward a minimum. Adjusting it over the course of training, with schedules like step decay and cosine annealing, leads to smoother optimization.

- Regularization: Techniques like L1 and L2 regularization, dropout, and batch normalization reduce the chances of overfitting, which improves the robustness and generalization of the model.

- Gradient Clipping: Deep learning faces a major challenge of exploding gradients. Gradient clipping controls this by capping the gradients at a maximum value before the update, which keeps training stable.
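Learning rate scheduling and gradient clipping from the list above are simple enough to sketch without a framework. The helper names, default parameters, and example values below are assumptions for illustration; deep learning libraries ship their own versions with different signatures.

```python
import math


def step_decay(base_lr, epoch, drop=0.5, every=10):
    """Multiply the learning rate by `drop` once every `every` epochs."""
    return base_lr * drop ** (epoch // every)


def cosine_annealing(base_lr, epoch, total_epochs, min_lr=0.0):
    """Decay from base_lr to min_lr along half a cosine wave."""
    cos = math.cos(math.pi * epoch / total_epochs)
    return min_lr + 0.5 * (base_lr - min_lr) * (1 + cos)


def clip_by_norm(grad, max_norm):
    """Rescale the gradient vector if its L2 norm exceeds max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    if norm <= max_norm:
        return list(grad)
    scale = max_norm / norm
    return [g * scale for g in grad]


for epoch in (0, 10, 25, 50, 100):
    print(epoch, step_decay(0.1, epoch), cosine_annealing(0.1, epoch, 100))

# A gradient with norm 5 is scaled down to norm 1; direction is preserved
print(clip_by_norm([3.0, 4.0], max_norm=1.0))
```

Clipping by norm, as sketched here, keeps the direction of the gradient and only limits its length. Clipping each component to a fixed range is an alternative that can change the direction.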

Conclusion

Understanding the difference between convex and concave functions is essential for solving optimization problems in machine learning. Convex functions offer a stable, reliable, and efficient path to the global solution. Concave functions come with their own complexities, like local minima and saddle points, which require more advanced and adaptive strategies. By choosing smart initialization, adaptive optimizers, and better regularization techniques, we can mitigate the challenges of concave optimization and achieve higher performance.
