Notice how the selected samples capture more varied writing styles and edge cases.

In some examples, like clusters 1, 3, and 8, the furthest point simply looks like a more varied example of the prototypical center.

Cluster 6 is an interesting case, showing how some images are difficult even for a human to identify. But you can still make out how this could end up in a cluster whose centroid is an 8.

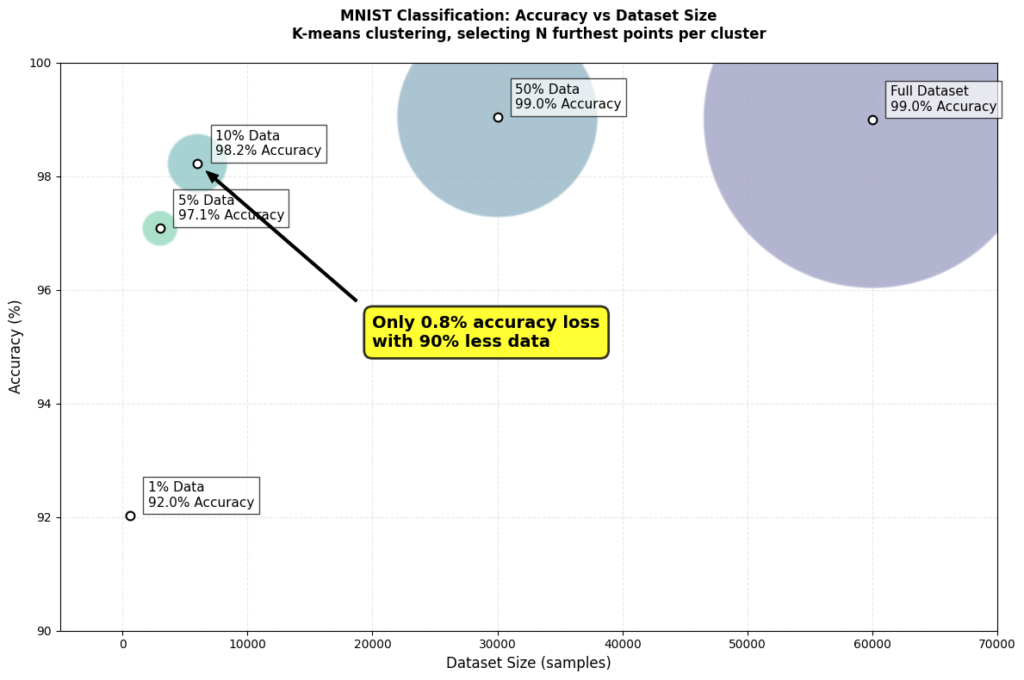

Recent research on neural scaling laws helps to explain why data pruning using a “furthest-from-centroid” approach works, especially on the MNIST dataset.

Data Redundancy

Many training examples in large datasets are highly redundant.

Think about MNIST: how many nearly identical ‘7’s do we really need? The key to data pruning isn’t having more examples; it’s having the right examples.

Selection Strategy vs Dataset Size

One of the most interesting findings from the above paper is how the optimal data selection strategy changes based on your dataset size:

- With “a lot” of data: Select harder, more diverse examples (furthest from cluster centers).

- With scarce data: Select easier, more typical examples (closest to cluster centers).

This explains why our “furthest-from-centroid” strategy worked so well.

With MNIST’s 60,000 training examples, we were in the “abundant data” regime, where selecting diverse, challenging examples proved most beneficial.

Inspiration and Goals

I was inspired by these two recent papers (and the fact that I’m a data engineer):

Both explore various ways we can use data selection strategies to train performant models on less data.

Methodology

I used LeNet-5 as my model architecture.
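For readers who have not seen it, here is a minimal sketch of what a LeNet-5 style network can look like. The post does not say which framework or which variant was used, so PyTorch, the tanh activations, average pooling, and `padding=2` on the first convolution (to accept 28×28 inputs) are all assumptions on my part, as is the class name `LeNet5`.

```python
import torch
from torch import nn


class LeNet5(nn.Module):
    """A common LeNet-5 variant for 28x28 grayscale images (assumed, not the post's exact model)."""

    def __init__(self, num_classes: int = 10):
        super().__init__()
        self.features = nn.Sequential(
            nn.Conv2d(1, 6, kernel_size=5, padding=2),  # 1x28x28 -> 6x28x28
            nn.Tanh(),
            nn.AvgPool2d(2),                            # -> 6x14x14
            nn.Conv2d(6, 16, kernel_size=5),            # -> 16x10x10
            nn.Tanh(),
            nn.AvgPool2d(2),                            # -> 16x5x5
        )
        self.classifier = nn.Sequential(
            nn.Flatten(),
            nn.Linear(16 * 5 * 5, 120),
            nn.Tanh(),
            nn.Linear(120, 84),
            nn.Tanh(),
            nn.Linear(84, num_classes),
        )

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        return self.classifier(self.features(x))


model = LeNet5()
logits = model(torch.zeros(2, 1, 28, 28))  # batch of two blank images
n_params = sum(p.numel() for p in model.parameters())
print(logits.shape, n_params)
```

The network is small (about 62k parameters in this variant), so training time is driven mainly by how many images are in the training set.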

Then, using one of the strategies below, I pruned the MNIST training dataset and trained a model. Testing was done against the full test set.

Due to time constraints, I only ran 5 tests per experiment.

Full code and results are available here on GitHub.

Strategy #1: Baseline, Full Dataset

- Standard LeNet-5 architecture

- Trained using 100% of training data

Strategy #2: Random Sampling

- Randomly sample individual images from the training dataset
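As a rough illustration, random sampling amounts to drawing a set of image indices without replacement. The helper name `random_subset_indices` and the use of NumPy are assumptions for this sketch, not taken from the original code.

```python
import numpy as np


def random_subset_indices(n_samples, keep_fraction, seed=0):
    """Return sorted indices of a uniform random subset, drawn without replacement."""
    rng = np.random.default_rng(seed)
    n_keep = int(round(n_samples * keep_fraction))
    return np.sort(rng.choice(n_samples, size=n_keep, replace=False))


# MNIST has 60,000 training images; keep half of them.
idx = random_subset_indices(60_000, keep_fraction=0.5)
print(len(idx))  # 30000
```

The resulting indices can then be used to slice the image and label arrays before training.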

Strategy #3: K-means Clustering with Different Selection Strategies

Here’s how this worked:

- Preprocess the images with PCA to reduce the dimensionality. This just means each image was reduced from 784 values (28×28 pixels) to only 50 values. PCA does this while retaining the most important patterns and removing redundant information.

- Cluster using k-means. The number of clusters was fixed at 50 and 500 in different tests. My poor CPU couldn’t handle much beyond 500 given all the experiments.

- I then tested different selection methods once the data was clustered:

  - Closest-to-centroid: these represent a “typical” example of the cluster.

  - Furthest-from-centroid: more representative of edge cases.

  - Random from each cluster: randomly select within each cluster.

- PCA reduced noise and computation time. At first I was just flattening the images. The results and compute both improved using PCA, so I kept it for the full experiment.

- I switched from standard K-means to MiniBatchKMeans clustering for better speed. The standard algorithm was too slow for my CPU given all the tests.

- Setting up a proper test harness was key. Moving experiment configs to a YAML file, automatically saving results to a file, and having o1 write my visualization code made life much easier.
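The PCA, clustering, and selection steps in the list above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the code from the repository: it uses scikit-learn's `PCA` and `MiniBatchKMeans`, the function name `select_by_cluster` is hypothetical, and it assumes the same fraction of samples is kept from every cluster. The demo runs on random data shaped like flattened MNIST images so that it needs no download.

```python
import numpy as np
from sklearn.cluster import MiniBatchKMeans
from sklearn.decomposition import PCA


def select_by_cluster(X, keep_fraction=0.5, n_clusters=50, n_components=50,
                      strategy="furthest", seed=0):
    """Return indices of the samples kept after cluster-based pruning.

    X is an (n_samples, n_features) array of flattened images.
    strategy is one of "closest", "furthest", or "random".
    """
    if strategy not in ("closest", "furthest", "random"):
        raise ValueError(f"unknown strategy: {strategy}")
    rng = np.random.default_rng(seed)

    # Step 1: compress each image to n_components values with PCA.
    Z = PCA(n_components=n_components, random_state=seed).fit_transform(X)

    # Step 2: cluster the compressed images.
    km = MiniBatchKMeans(n_clusters=n_clusters, random_state=seed, n_init=3)
    labels = km.fit_predict(Z)

    # Distance from every sample to the centroid of its own cluster.
    dist = np.linalg.norm(Z - km.cluster_centers_[labels], axis=1)

    # Step 3: keep the same fraction of every cluster (an assumption).
    kept = []
    for c in range(n_clusters):
        members = np.flatnonzero(labels == c)
        if members.size == 0:
            continue
        n_keep = max(1, int(round(members.size * keep_fraction)))
        if strategy == "random":
            chosen = rng.choice(members, size=n_keep, replace=False)
        else:
            order = np.argsort(dist[members])  # nearest to centroid first
            if strategy == "furthest":
                order = order[::-1]            # furthest from centroid first
            chosen = members[order[:n_keep]]
        kept.append(chosen)
    return np.sort(np.concatenate(kept))


# Demo on random data shaped like flattened 28x28 images (not real MNIST).
X_demo = np.random.default_rng(0).random((2000, 784))
idx_far = select_by_cluster(X_demo, n_clusters=10, strategy="furthest")
idx_near = select_by_cluster(X_demo, n_clusters=10, strategy="closest")
print(len(idx_far), len(idx_near))
```

With `keep_fraction=0.5`, the closest and furthest selections are near complements of each other within each cluster, which makes it easy to compare "typical" against "edge case" training subsets of the same size.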

Median Accuracy & Run Time

Here are the median results, comparing our baseline LeNet-5 trained on the full dataset with two different strategies that used 50% of the dataset.

Accuracy vs Run Time Full Results

The charts below show the results of my four pruning strategies compared to the baseline in pink.

Key findings across multiple runs:

- Furthest-from-centroid consistently outperformed other methods

- There definitely is a sweet spot between compute time and model accuracy if you want to find it for your use case. More work needs to be done here.

I’m still surprised that just randomly reducing the dataset gives acceptable results if efficiency is what you’re after.

Future Plans

- Test this on my second brain. I want to fine-tune an LLM on my full Obsidian and test data pruning along with hierarchical summarization.

- Explore other embedding methods for clustering. I can try training an auto-encoder to embed the images rather than use PCA.

- Test this on more complex and larger datasets (CIFAR-10, ImageNet).

- Experiment with how model architecture impacts the performance of data pruning strategies.

These findings suggest we need to rethink our approach to dataset curation:

- More data isn’t always better; there seem to be diminishing returns to bigger data and bigger models.

- Strategic pruning can actually improve results.

- The optimal strategy depends on your starting dataset size.

As people start sounding the alarm that we’re running out of data, I can’t help but wonder if less data is actually the key to useful, cost-effective models.

I intend to continue exploring the space. Please reach out if you find this interesting; happy to connect and talk more 🙂