Large Language Models are great, but they have a slight problem: they use softmax attention, which can be computationally intensive. In this article we will explore whether there is a way to change the softmax so that we achieve linear time complexity.

I'm going to assume you already know about things like ChatGPT, Claude, and how transformers work in these models. Well, attention is the backbone of such models. If we think of ordinary RNNs, we encode all past states in a hidden state and then use that hidden state together with the new query to produce our output. A clear problem here is that you can't store everything in just a small hidden state. This is where attention helps: imagine that for each new query you could find the most relevant past data and use it to make your prediction. That's essentially what attention does.

The attention mechanism in transformers (the architecture behind most current language models) involves query, key and value embeddings. It works by matching queries against keys to retrieve relevant values: for each query (Q), the model computes similarity scores with all available keys (K), then uses these scores to form a weighted combination of the corresponding values (V). This attention calculation can be expressed as:

$$\text{Attention}(Q, K, V) = \text{softmax}\left(\frac{QK^\top}{\sqrt{d}}\right)V$$

where d is the dimension of the query and key vectors.

This mechanism enables the model to selectively retrieve and use information from its entire context when making predictions. We use softmax here because it converts raw similarity scores into normalized probabilities, acting much like a soft k-nearest-neighbor lookup in which higher attention weights are assigned to more relevant keys.
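To make the formula concrete, here is a minimal NumPy sketch of (non-causal) scaled dot-product attention. The function name `softmax_attention` and the random toy inputs are illustrative assumptions; real models also use learned projections, multiple heads and, for language modeling, a causal mask. Note that it explicitly builds the N × N weight matrix.

```python
import numpy as np

def softmax_attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V, with no causal mask.

    Q and K have shape (N, d), V has shape (N, d_v). The full (N, N) weight
    matrix is materialised, which is what makes this O(N^2 d).
    """
    d = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d)                             # (N, N) query-key similarities
    scores = scores - scores.max(axis=-1, keepdims=True)      # shift each row for numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)   # each row now sums to 1
    return weights @ V, weights                               # weighted mix of the values

# Toy usage with random inputs (illustrative only)
rng = np.random.default_rng(0)
N, d = 6, 4
Q, K, V = (rng.standard_normal((N, d)) for _ in range(3))
out, weights = softmax_attention(Q, K, V)
print(out.shape, weights.sum(axis=-1))
```

A query that strongly matches one key puts almost all of its weight on that key, which is the "soft nearest neighbor" behavior described above.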

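Okay, now let's look at the computational cost of one attention layer. For a sequence of N tokens with dimension d, computing QKᵀ takes O(N²d) operations, the softmax over the resulting N × N matrix takes O(N²), and multiplying the attention weights by V takes another O(N²d), for a total of O(N²d).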

The Problem with Softmax

From the above, we can see that we need to compute a softmax over an N × N matrix, so our computational cost becomes quadratic in sequence length. This is fine for shorter sequences, but it becomes extremely inefficient for long sequences (N = 100k+).
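Some back-of-the-envelope arithmetic shows how quickly this grows. The sketch below is a rough operation count that assumes a single head with d = 128 (an illustrative value) and ignores constant factors and the softmax itself; the helper name is hypothetical.

```python
def softmax_attention_cost(N, d):
    # ~N^2 d multiply-adds for Q K^T plus ~N^2 d for (weights @ V)
    return 2 * N * N * d

n_short = softmax_attention_cost(N=1_000, d=128)
n_long = softmax_attention_cost(N=100_000, d=128)
print(f"{n_short:.2e} vs {n_long:.2e}: {n_long // n_short}x the work for 100x the tokens")
```

Because the cost is quadratic, 100 times more tokens means 100² = 10,000 times more work.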

This gives us our motivation: can we reduce this computational cost? This is where linear attention comes in.

Introduced by Katharopoulos et al., linear attention uses a clever trick: we write the exponential similarity inside the softmax as a kernel function, i.e. as a dot product of feature maps, exp(q·k/√d) ≈ φ(q)·φ(k). Using the associativity of matrix multiplication, we can then rewrite the attention computation so that it is linear in sequence length. For the i-th output:

$$V'_i = \frac{\sum_{j} \phi(Q_i)\,\phi(K_j)^\top V_j}{\sum_{j} \phi(Q_i)\,\phi(K_j)^\top} = \frac{\phi(Q_i)\left(\sum_{j} \phi(K_j)^\top V_j\right)}{\phi(Q_i)\sum_{j} \phi(K_j)^\top}$$

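Katharopoulos et al. used elu(x) + 1 as φ(x), but in principle any feature map that keeps the similarities non-negative can be used, ideally one that approximates the exponential similarity well. The cost of the rewritten computation breaks down as follows: computing Σ_j φ(K_j)ᵀV_j (a single d × d matrix) and the normaliser Σ_j φ(K_j)ᵀ takes O(Nd²) operations, and multiplying each φ(Q_i) by that matrix takes another O(Nd²).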

This eliminates the need to compute the full N × N attention matrix and reduces the complexity to O(Nd²), where d is the embedding dimension. This is effectively linear complexity when N ≫ d, which is usually the case with Large Language Models.
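A minimal NumPy sketch (non-causal, function names hypothetical, elu(x) + 1 as φ) confirms that the regrouping changes only the cost, not the result: (φ(Q)φ(K)ᵀ)V and φ(Q)(φ(K)ᵀV) give the same outputs.

```python
import numpy as np

def elu_plus_one(x):
    # phi(x) = elu(x) + 1 = x + 1 for x > 0 and exp(x) for x <= 0, so features stay positive
    return np.where(x > 0, x + 1.0, np.exp(np.minimum(x, 0.0)))

def linear_attention_naive(Q, K, V, phi=elu_plus_one):
    # (phi(Q) phi(K)^T) V: still builds an (N, N) matrix, O(N^2 d)
    A = phi(Q) @ phi(K).T
    return (A @ V) / A.sum(axis=-1, keepdims=True)

def linear_attention(Q, K, V, phi=elu_plus_one):
    # phi(Q) (phi(K)^T V): regrouped by associativity, never forms an N x N matrix, O(N d^2)
    Qf, Kf = phi(Q), phi(K)
    KV = Kf.T @ V                    # (d, d_v): sum_j phi(K_j)^T V_j
    z = Kf.sum(axis=0)               # (d,): sum_j phi(K_j), the normaliser
    return (Qf @ KV) / (Qf @ z)[:, None]

rng = np.random.default_rng(0)
N, d = 8, 4
Q, K, V = (rng.standard_normal((N, d)) for _ in range(3))
print(np.allclose(linear_attention(Q, K, V), linear_attention_naive(Q, K, V)))
```

For N = 100k tokens and d = 128 (illustrative numbers), the two groupings cost on the order of N²d versus Nd² operations, a factor of N/d ≈ 780 apart.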

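Okay, let's look at the recurrent view of linear attention. If each token may only attend to itself and earlier tokens (causal attention), the key-value sums only run over j ≤ n, so we can keep them as running totals S_n and z_n:

$$S_n = S_{n-1} + \phi(K_n)^\top V_n, \qquad z_n = z_{n-1} + \phi(K_n)^\top, \qquad V'_n = \frac{\phi(Q_n)\,S_n}{\phi(Q_n)\,z_n}$$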

So why can we do this in linear attention but not with softmax? Well, the exponential inside the softmax is not separable: exp(q·k) cannot be written exactly as a product of separate query and key terms. A nice thing to note here is that during decoding we only need to keep track of S_(n-1), giving us O(d²) cost per generated token, since S is a d × d matrix.
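The two views can be checked against each other with a minimal NumPy sketch (function names hypothetical, elu(x) + 1 as φ): the parallel form builds a masked N × N matrix, as one might during training, while the recurrent form carries only S (d × d) and z (length d) from token to token, so each step costs O(d²) no matter how long the sequence is.

```python
import numpy as np

def phi(x):
    # elu(x) + 1 feature map (always positive)
    return np.where(x > 0, x + 1.0, np.exp(np.minimum(x, 0.0)))

def causal_linear_attention_parallel(Q, K, V):
    # Training-style form: keep only j <= i in phi(Q) phi(K)^T (O(N^2), for reference)
    A = np.tril(phi(Q) @ phi(K).T)
    return (A @ V) / A.sum(axis=-1, keepdims=True)

def causal_linear_attention_recurrent(Q, K, V):
    # Decoding-style form: only S (d x d_v) and z (d,) are carried between steps
    S = np.zeros((Q.shape[1], V.shape[1]))
    z = np.zeros(Q.shape[1])
    outputs = []
    for q, k, v in zip(Q, K, V):
        fk = phi(k)
        S = S + np.outer(fk, v)          # S_n = S_{n-1} + phi(k_n)^T v_n
        z = z + fk                       # z_n = z_{n-1} + phi(k_n)
        fq = phi(q)
        outputs.append(fq @ S / (fq @ z))
    return np.stack(outputs)

rng = np.random.default_rng(0)
N, d = 7, 4
Q, K, V = (rng.standard_normal((N, d)) for _ in range(3))
print(np.allclose(causal_linear_attention_recurrent(Q, K, V),
                  causal_linear_attention_parallel(Q, K, V)))
```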

However, this efficiency comes with an important drawback. Since S_(n-1) can only hold d² numbers (being a d × d matrix), we face a fundamental limitation. For instance, if your context requires storing 20d² worth of information, you will inevitably lose about 19d² worth of it in the compression. This illustrates the core memory-efficiency trade-off in linear attention: we gain computational efficiency by maintaining only a fixed-size state matrix, but that same fixed size limits how much context information we can preserve. This gives us the motivation for gating.
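A small experiment can make the capacity argument tangible. The sketch below is deliberately simplified (raw unit-norm keys, no feature map φ, no normaliser, and a hypothetical helper `retrieval_error`): it writes key-value pairs into a single d × d state by summing outer products, then queries the state with each stored key. With at most d orthonormal keys retrieval is exact; with more keys than dimensions, the keys must overlap and the recalled values get mixed together.

```python
import numpy as np

def retrieval_error(num_pairs, d, orthogonal, seed=0):
    """Write num_pairs (key, value) pairs into one d x d state, then read each one back.

    Returns the relative error of the recalled values.
    """
    rng = np.random.default_rng(seed)
    if orthogonal:
        if num_pairs > d:
            raise ValueError("at most d mutually orthonormal keys exist in d dimensions")
        basis, _ = np.linalg.qr(rng.standard_normal((d, d)))
        keys = basis[:num_pairs]                 # orthonormal rows
    else:
        keys = rng.standard_normal((num_pairs, d))
        keys /= np.linalg.norm(keys, axis=1, keepdims=True)
    values = rng.standard_normal((num_pairs, d))
    S = keys.T @ values        # sum_i k_i^T v_i: every pair squeezed into one d x d matrix
    recalled = keys @ S        # query the state with each stored key
    return np.linalg.norm(recalled - values) / np.linalg.norm(values)

d = 16
print(retrieval_error(d, d, orthogonal=True))        # d pairs, orthonormal keys
print(retrieval_error(4 * d, d, orthogonal=False))   # 4d pairs: keys must overlap
```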

Okay, so we've established that we will inevitably forget information when we optimize for efficiency with a fixed-size state matrix. This raises an important question: can we be smart about what we remember? This is where gating comes in. Researchers use it as a mechanism to selectively retain important information, trying to minimize the impact of memory loss by being strategic about what to keep in our limited state. Gating isn't a new idea; it has been widely used in architectures like LSTMs.

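The key change here is in how we formulate S_n: instead of always adding the new key-value outer product to the unchanged previous state, we first scale the previous state elementwise by a gate G_n (with G_n = 1 we recover plain linear attention):

$$S_n = G_n \odot S_{n-1} + \phi(K_n)^\top V_n$$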

There are many choices for G, each leading to a different model.

A key advantage of this architecture is that the gating function depends only on the current token x and learnable parameters, rather than on the entire sequence history. Since each token's gate is computed independently, this allows efficient parallel processing during training: all gating computations across the sequence can be carried out simultaneously.
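Here is a minimal sketch of the gated recurrence, assuming one particular gate for illustration: G_n = α_nᵀ1 with α_n = sigmoid(x_n W_g), so each row of S decays at its own input-dependent rate between 0 and 1 (loosely in the spirit of gated linear attention, GLA). To keep it short, φ is taken as the identity and the normaliser is dropped; all weight names are hypothetical. Because the gates depend only on each token, they are computed for the whole sequence in one matrix product before the loop.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def gated_linear_attention(X, W_q, W_k, W_v, W_g):
    """S_n = G_n * S_{n-1} + k_n^T v_n, output q_n S_n (phi = identity, no normaliser).

    Hypothetical gate: G_n = alpha_n^T 1 with alpha_n = sigmoid(x_n W_g), i.e. row i of S
    (key dimension i) is multiplied by alpha_n[i], a value between 0 and 1.
    """
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    alpha = sigmoid(X @ W_g)        # (N, d_k): all gates at once, each depends only on x_n
    S = np.zeros((K.shape[1], V.shape[1]))
    outputs = []
    for q, k, v, a in zip(Q, K, V, alpha):
        S = a[:, None] * S + np.outer(k, v)   # decay old memory, then write the new pair
        outputs.append(q @ S)                 # read out with the current query
    return np.stack(outputs)

rng = np.random.default_rng(1)
N, d_model, d = 5, 3, 4
X = rng.standard_normal((N, d_model))
W_q, W_k, W_v, W_g = (rng.standard_normal((d_model, d)) for _ in range(4))
out = gated_linear_attention(X, W_q, W_k, W_v, W_g)
print(out.shape)
```

The loop is written sequentially for clarity; practical implementations use parallel or chunked formulations for training.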

When we think about processing sequences like text or time series, our minds usually jump to attention mechanisms or RNNs. But what if we took a completely different approach? Instead of treating sequences as, well, sequences, what if we processed them more like how CNNs handle images, using convolutions?

State Space Models (SSMs) formalize this approach through a discrete linear time-invariant system:

$$h_t = A\,h_{t-1} + B\,x_t, \qquad y_t = c\,h_t$$

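Okay, now let's see how this relates to convolution. Starting from a zero state h_0 = 0 and unrolling the recurrence, every output is a weighted sum of past inputs, with weights that do not depend on t:

$$y_t = \sum_{j=0}^{t-1} c\,A^{j}B\,x_{t-j} \quad\Longrightarrow\quad y = F * x, \qquad F = \left(cB,\; cAB,\; cA^{2}B,\; \dots\right)$$

where F is our learned filter derived from the parameters (A, B, c), and * denotes convolution.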
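A small NumPy check that the two views agree, assuming a single input and output channel, a state of size n = 3, and random (illustrative) parameters: running the recurrence step by step gives the same outputs as convolving the input with the kernel F_j = cA^jB. Real SSM layers compute this kernel far more cleverly and use FFT-based convolution; the loops here are only for readability, and the function names are hypothetical.

```python
import numpy as np

def ssm_recurrent(A, B, c, x):
    # h_t = A h_{t-1} + B x_t, y_t = c . h_t, starting from a zero state (scalar input and output)
    h = np.zeros(A.shape[0])
    ys = []
    for x_t in x:
        h = A @ h + B * x_t
        ys.append(c @ h)
    return np.array(ys)

def ssm_kernel(A, B, c, length):
    # F_j = c A^j B for j = 0, ..., length - 1
    F = []
    AjB = B.copy()
    for _ in range(length):
        F.append(c @ AjB)
        AjB = A @ AjB
    return np.array(F)

def ssm_convolutional(A, B, c, x):
    # y = F * x; the first len(x) outputs of the full convolution are the causal part
    F = ssm_kernel(A, B, c, len(x))
    return np.convolve(x, F)[: len(x)]

rng = np.random.default_rng(0)
n = 3                                                # state size (assumed)
A = 0.5 * rng.standard_normal((n, n)) / np.sqrt(n)  # small entries, purely illustrative
B, c = rng.standard_normal(n), rng.standard_normal(n)
x = rng.standard_normal(10)
print(np.allclose(ssm_recurrent(A, B, c, x), ssm_convolutional(A, B, c, x)))
```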

H3 implements this state space formulation through a novel structured architecture consisting of two complementary SSM layers.

Here we take the input and split it into 3 channels that mimic K, Q and V. We then use 2 SSMs and 2 gates to roughly imitate linear attention, and it turns out that this kind of architecture works quite well in practice.
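As a rough illustration, here is a heavily simplified, single-head, elementwise sketch of the layout Q ⊙ SSM_diag(SSM_shift(K) ⊙ V), which is how I understand the H3 layer is organized. Both SSMs are replaced by hypothetical stand-ins: the shift SSM by a short causal filter per channel (it only looks at the last few tokens), and the diagonal SSM by a per-channel exponential decay (a long, fading memory). The actual layer is multi-headed and learns its SSM parameters; all names here are assumptions.

```python
import numpy as np

def shift_ssm(x, kernel):
    # Stand-in for the shift SSM: a short causal filter per channel. x: (N, D), kernel: (L, D)
    N = x.shape[0]
    out = np.zeros_like(x)
    for j in range(min(kernel.shape[0], N)):
        out[j:] += kernel[j] * x[: N - j]
    return out

def diag_ssm(x, decay):
    # Stand-in for the diagonal SSM: h_t = decay * h_{t-1} + x_t for each channel
    h = np.zeros(x.shape[1])
    out = np.empty_like(x)
    for t in range(x.shape[0]):
        h = decay * h + x[t]
        out[t] = h
    return out

def h3_like_layer(X, W_q, W_k, W_v, W_o, kernel, decay):
    Q, K, V = X @ W_q, X @ W_k, X @ W_v              # three channels mimicking Q, K and V
    KV = diag_ssm(shift_ssm(K, kernel) * V, decay)   # SSM 1, gate 1 (by V), SSM 2
    return (Q * KV) @ W_o                             # gate 2 (by Q), then output projection

rng = np.random.default_rng(0)
N, D = 7, 4
X = rng.standard_normal((N, D))
W_q, W_k, W_v, W_o = (rng.standard_normal((D, D)) for _ in range(4))
kernel, decay = rng.standard_normal((3, D)), np.full(D, 0.9)
Y = h3_like_layer(X, W_q, W_k, W_v, W_o, kernel, decay)
print(Y.shape)
```

The running sum inside `diag_ssm` plays a role similar to the state S in linear attention, and the final multiplication by Q is the "read-out" step.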

Earlier, we saw how gated linear attention improved on standard linear attention by making the information-retention process data-dependent. A similar limitation exists in State Space Models: the parameters A, B, and c that govern state transitions and outputs are fixed and data-independent. This means every input is processed by the same static system, regardless of its importance or context.

We can extend SSMs by making them data-dependent through time-varying dynamical systems:

$$h_t = A_t\,h_{t-1} + B_t\,x_t, \qquad y_t = c_t\,h_t$$

The key question becomes how to parametrize c_t, B_t, and A_t as functions of the input. Different parameterizations can lead to architectures that approximate either linear or gated attention mechanisms.
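Below is a minimal sketch of a time-varying SSM whose parameters are computed from the current input. The parameterization is an assumption, loosely modeled on the selective SSM in Mamba as I understand it: a positive step size Δ_t = softplus(x_t W_Δ + b_Δ), a decay A_t = exp(Δ_t A) with negative A, an input vector B_t = x_t W_B scaled by Δ_t, and a readout c_t = x_t W_c. Mamba's real layer has further details (for example a skip connection and a hardware-aware parallel scan); all names here are hypothetical.

```python
import numpy as np

def softplus(x):
    return np.logaddexp(0.0, x)   # log(1 + exp(x)), computed stably

def selective_scan(X, A, W_delta, b_delta, W_B, W_c):
    """Time-varying SSM h_t = A_t h_{t-1} + B_t x_t, y_t = c_t h_t, one n-dim state per channel.

    X: (N, D) inputs, A: (D, n) with negative entries, W_delta: (D, D), b_delta: (D,),
    W_B and W_c: (D, n).
    """
    h = np.zeros_like(A)                                  # (D, n) states
    Y = np.empty_like(X)
    for t, x_t in enumerate(X):
        delta = softplus(x_t @ W_delta + b_delta)         # (D,) step size chosen by the input
        A_t = np.exp(delta[:, None] * A)                  # (D, n) per-step decay between 0 and 1
        B_t = x_t @ W_B                                   # (n,) input-dependent write vector
        c_t = x_t @ W_c                                   # (n,) input-dependent read vector
        h = A_t * h + delta[:, None] * B_t[None, :] * x_t[:, None]
        Y[t] = h @ c_t                                    # (D,) outputs
    return Y

rng = np.random.default_rng(0)
N, D, n = 6, 3, 4
X = rng.standard_normal((N, D))
A = -np.exp(rng.standard_normal((D, n)))                  # strictly negative entries
W_delta = rng.standard_normal((D, D))
W_B, W_c = rng.standard_normal((D, n)), rng.standard_normal((D, n))
b_delta = np.zeros(D)
Y = selective_scan(X, A, W_delta, b_delta, W_B, W_c)
print(Y.shape)
```

Unlike the LTI case, the decay and the read/write vectors change with every token, so the model can choose, per input, how much of its state to keep; the price is that the whole system can no longer be written as one fixed convolution kernel.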

Mamba implements this time-varying state space formulation through selective SSM blocks.

Here Mamba uses a selective SSM instead of a standard SSM, and adds output gating and an extra convolution to improve performance. This is a very high-level picture of how Mamba combines these components into an efficient architecture for sequence modeling.

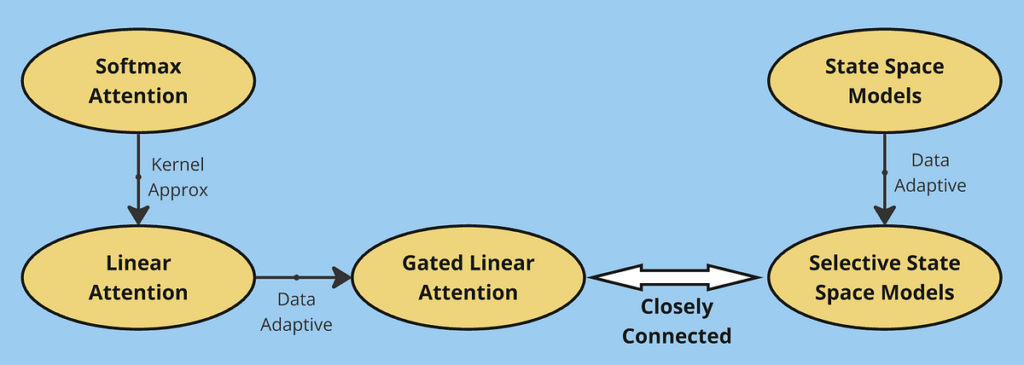
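A rough sketch of how these pieces are wired together in a Mamba-style block, as I understand it: an input projection split into a main branch and a gate branch, a short causal convolution followed by SiLU on the main branch, the sequence-mixing SSM, multiplication by SiLU of the gate branch, and an output projection. To keep it short, the SSM slot is a pluggable function, and the usage below plugs in a fixed-decay stand-in rather than a real selective scan; residual connections and normalization around the block are omitted, and all names are hypothetical.

```python
import numpy as np

def silu(x):
    return x / (1.0 + np.exp(-x))

def causal_depthwise_conv(x, kernel):
    # x: (N, D), kernel: (L, D); each channel is filtered on its own and only sees past tokens
    N = x.shape[0]
    out = np.zeros_like(x)
    for j in range(min(kernel.shape[0], N)):
        out[j:] += kernel[j] * x[: N - j]
    return out

def mamba_like_block(X, W_in, conv_kernel, ssm_fn, W_out):
    """X: (N, D_model), W_in: (D_model, 2 * D_inner), W_out: (D_inner, D_model)."""
    D_inner = W_out.shape[0]
    U = X @ W_in
    u, z = U[:, :D_inner], U[:, D_inner:]             # main branch and gate branch
    u = silu(causal_depthwise_conv(u, conv_kernel))   # the extra short convolution
    y = ssm_fn(u)                                     # sequence mixing (a selective SSM in Mamba)
    y = y * silu(z)                                   # output gating
    return y @ W_out

def decay_ssm(u, decay=0.9):
    # Hypothetical stand-in for the selective SSM: a fixed per-channel decay (NOT selective)
    h = np.zeros(u.shape[1])
    out = np.empty_like(u)
    for t in range(u.shape[0]):
        h = decay * h + u[t]
        out[t] = h
    return out

rng = np.random.default_rng(0)
N, D_model, D_inner = 5, 3, 6
X = rng.standard_normal((N, D_model))
W_in = rng.standard_normal((D_model, 2 * D_inner))
W_out = rng.standard_normal((D_inner, D_model))
conv_kernel = rng.standard_normal((4, D_inner))
Y = mamba_like_block(X, W_in, conv_kernel, decay_ssm, W_out)
print(Y.shape)
```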

In this article, we explored the evolution of efficient sequence modeling architectures. Starting with traditional softmax attention, we identified its quadratic complexity, which led to the development of linear attention. By rewriting attention using kernel functions, linear attention achieved O(Nd²) complexity but faced memory limitations due to its fixed-size state matrix.

This limitation motivated gated linear attention, which introduced selective information retention through gating mechanisms. We then explored an alternative perspective through State Space Models, showing how they process sequences using convolution-like operations. The progression from basic SSMs to time-varying systems and finally to selective SSMs parallels our journey from linear to gated attention: in both cases, making the models more adaptive to the input data proved crucial for performance.

Across these developments, we see a common theme: the fundamental trade-off between computational efficiency and memory capacity. Softmax attention excels at in-context learning by maintaining full attention over the entire sequence, but at the cost of quadratic complexity. Linear variants (including SSMs) achieve efficient computation through fixed-size state representations, but this same optimization limits their ability to maintain a detailed memory of past context. This trade-off remains a central challenge in sequence modeling, driving the search for architectures that better balance these competing demands.

To read more on these topics, I would suggest the following papers:

Linear Attention: Katharopoulos, Angelos, et al. "Transformers are RNNs: Fast Autoregressive Transformers with Linear Attention." International Conference on Machine Learning. PMLR, 2020.

This blog post was inspired by coursework from my graduate studies during Fall 2024 at the University of Michigan. While the courses provided the foundational knowledge and motivation to explore these topics, any errors or misinterpretations in this article are entirely my own. This represents my personal understanding and exploration of the material.