In “Model Context Protocol (MCP) Tutorial: Build Your First MCP Server in 6 Steps”, we introduced the MCP architecture and explored MCP servers in detail. In this tutorial we continue our MCP exploration by building an interactive MCP client interface with Streamlit. The main difference between an MCP server and an MCP client is that the server provides functionality by connecting to a diverse range of tools and resources, whereas the client consumes that functionality through an interface. Streamlit, a lightweight Python library for developing data-driven interactive web applications, accelerates the development cycle and abstracts away frontend frameworks, which makes it a good choice for rapid prototyping and streamlined deployment of AI-powered tools. We will therefore use Streamlit to build our MCP client user interface with minimal setup, while focusing on connecting to remote MCP servers to explore various AI functionalities.

Project Overview

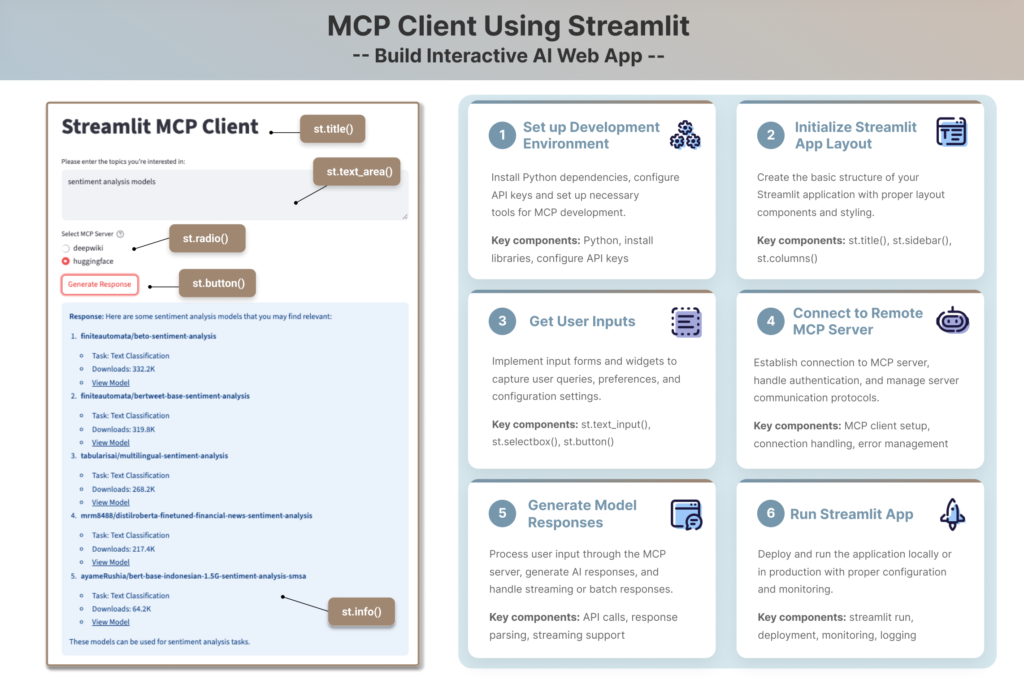

The goal is an interactive web app prototype where users can enter their topics of interest and choose between two MCP servers, DeepWiki and Hugging Face, that provide relevant resources. DeepWiki specializes in summarizing codebases and GitHub repositories, while the Hugging Face MCP server recommends open-source datasets and models related to the user’s topics. The image above shows the web app’s output for the topic “sentiment analysis”.

To develop the Streamlit MCP client, we’ll break the work down into the following steps:

- Set Up Development Environment

- Initialize Streamlit App Layout

- Get User Inputs

- Connect to Remote MCP Servers

- Generate Model Responses

- Run the Streamlit App

Set Up Development Environment

First, let’s set up our project directory using a simple structure.

    mcp_streamlit_client/
    ├── .env              # Environment variables (API keys)
    ├── README.md         # Project documentation
    ├── requirements.txt  # Required libraries and dependencies
    └── app.py            # Main Streamlit application

Then install the necessary libraries: we need streamlit for building the web interface, openai for interacting with OpenAI’s API (which supports MCP), and python-dotenv for loading API keys from the .env file.

    pip install streamlit openai python-dotenv

Alternatively, you can list the library versions in a requirements.txt file for reproducible installations and install them by running:

    pip install -r requirements.txt

Second, secure your API keys using environment variables. When working with LLM providers like OpenAI, you will need to set up an API key. To keep this key confidential, the best practice is to load it from an environment variable and avoid hard-coding it directly into your script, especially if you plan to share your code or deploy your application. To do this, add your API keys to the .env file using the following format. We will also need a Hugging Face API token to access its remote MCP server.

    OPENAI_API_KEY="your_openai_api_key_here"
    HF_API_KEY="your_huggingface_api_key_here"

Now, in the script app.py, you can load these variables into your application’s environment using load_dotenv() from the dotenv library. This function reads the key-value pairs from your .env file and makes them accessible via os.getenv().
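To make the mechanism concrete, here is a simplified sketch of what reading a .env file involves, together with a check for missing keys. It uses only the standard library and is not python-dotenv’s actual implementation; parse_env_text and missing_keys are hypothetical helper names introduced for illustration.

```python
import os


def parse_env_text(text: str) -> dict:
    """Parse KEY="value" lines, skipping blank lines and # comments."""
    pairs = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop surrounding whitespace and optional quotes around the value
        pairs[key.strip()] = value.strip().strip('"').strip("'")
    return pairs


def missing_keys(required, environ=os.environ):
    """Return the names in `required` that are unset or empty."""
    return [name for name in required if not environ.get(name)]


sample = (
    'OPENAI_API_KEY="your_openai_api_key_here"\n'
    'HF_API_KEY="your_huggingface_api_key_here"\n'
)
parsed = parse_env_text(sample)
print(sorted(parsed))
print(missing_keys(["OPENAI_API_KEY", "HF_API_KEY"], environ=parsed))
```

Checking for missing keys at startup gives a clear message early instead of a failed request later. In app.py itself, the dotenv library takes care of the loading: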

    from dotenv import load_dotenv
    import os

    load_dotenv()

    # Access the Hugging Face API key using os.getenv()
    HF_API_KEY = os.getenv('HF_API_KEY')

Connect MCP Server and Client

Before diving into MCP client development, let’s understand the basics of establishing an MCP server-client connection. With the growing popularity of MCP, an increasing number of LLM providers now support MCP client implementation. For example, OpenAI offers a straightforward initialization method using the code below.

    from openai import OpenAI

    client = OpenAI()  # reads OPENAI_API_KEY from the environment

Further Reading:

The article “For Client Developers” provides an example of setting up an Anthropic MCP client, which is slightly more complicated but also more robust, as it enables better session and resource management.

To connect the client to an MCP server, you’ll need to implement a connection method that takes either a server script path (for local MCP servers) or a URL (for remote MCP servers) as input. Local MCP servers are programs that run on your local machine, while remote MCP servers are deployed online and accessible through a URL. In the example below, we connect to the remote MCP server “DeepWiki” through “https://mcp.deepwiki.com/mcp”.

    response = client.responses.create(
        model="gpt-4.1",
        tools=[
            {
                "type": "mcp",
                "server_label": "deepwiki",
                "server_url": "https://mcp.deepwiki.com/mcp",
                "require_approval": "never",
            },
        ],
        # Illustrative prompt (not part of the original snippet); replace with your own
        input="What is the Model Context Protocol?",
    )

Further Reading:

You can also explore other MCP server options in the comprehensive “Remote MCP Servers Catalogue” to suit your specific needs. The article “For Client Developers” also provides an example of connecting to local MCP servers.
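To make the local versus remote distinction concrete, here is a minimal sketch of how a connection method might decide which kind of target it received. The helper name classify_server_target is hypothetical, and the rule that local servers are launched from .py or .js scripts is an assumption for illustration, not part of any SDK.

```python
from urllib.parse import urlparse


def classify_server_target(target: str) -> str:
    """Return "remote" for an http(s) URL and "local" for a script path."""
    parsed = urlparse(target)
    if parsed.scheme in ("http", "https") and parsed.netloc:
        return "remote"
    # Assumption: local servers are started from a .py or .js script
    if target.endswith((".py", ".js")):
        return "local"
    raise ValueError(f"Unrecognized MCP server target: {target!r}")


print(classify_server_target("https://mcp.deepwiki.com/mcp"))
print(classify_server_target("./weather_server.py"))
```

In this tutorial we only use the remote branch, since both DeepWiki and Hugging Face are reached through a URL.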

Build the Streamlit MCP Client

Now that we understand the fundamentals of establishing connections between MCP clients and servers, we’ll encapsulate this functionality within a web interface for a better user experience. The web app is designed with modularity in mind and is composed of several parts implemented with Streamlit methods such as st.radio(), st.button(), st.info(), st.title() and st.text_area().

1. Initialize Your Streamlit Page

We will start with an initialize_page() function that sets the page icon and title, and uses layout="centered" so that the overall web app layout is aligned in the center. This function returns a column object below the page title, where we’ll place the widgets shown in the following steps.

    import streamlit as st

    def initialize_page():
        """Initialize the Streamlit page configuration and layout"""
        st.set_page_config(
            page_icon="🤖",  # A robot emoji as the page icon
            layout="centered"  # Center the content on the page
        )
        st.title("Streamlit MCP Client")  # Set the main title of the app
        # Return a column object which can be used to place widgets
        return st.columns(1)[0]

2. Get User Inputs

The get_user_input() function lets users provide their input by creating a text area widget with st.text_area(). The height parameter ensures the input box is sufficiently sized, and the placeholder text prompts the user with specific instructions.

    def get_user_input(column):
        """Collect the user's topics of interest and return the text"""
        user_text = column.text_area(
            "Please enter the topics you're interested in:",
            height=100,
            placeholder="Type it here..."
        )
        return user_text

3. Connect to MCP Servers

The create_mcp_server_dropdown() function gives users the flexibility to choose from a range of MCP servers. It defines a dictionary of available MCP servers, mapping a label (like “deepwiki” or “huggingface”) to its corresponding server URL. Streamlit’s st.radio() widget displays these options as radio buttons for users to choose from. The function then returns both the selected server’s label and its URL, which feed into the next step to generate responses.

    def create_mcp_server_dropdown():
        # Define a dictionary of MCP servers, mapping labels to URLs
        mcp_servers = {
            "deepwiki": "https://mcp.deepwiki.com/mcp",
            "huggingface": "https://huggingface.co/mcp"
        }
        # Create a radio button for selecting the MCP server
        selected_server = st.radio(
            "Select MCP Server",
            options=list(mcp_servers.keys()),
            help="Choose the MCP server you want to connect to"
        )
        # Get the URL corresponding to the selected server
        server_url = mcp_servers[selected_server]
        return selected_server, server_url

4. Generate Responses

Earlier we saw how to use client.responses.create() as a standard way to generate responses. The generate_response() function below extends this by passing several custom parameters:

- model: choose the LLM that fits your budget and purpose.

- tools: determined by the MCP server URL the user selected. In this case, since the Hugging Face server requires user authentication, we also specify the API key in the tool configuration and display a warning message when the key is not found.

- input: combines the user’s query with tool-specific instructions to provide clear context for the prompt.
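As a rough sketch of how these three parameters fit together, the helpers below assemble the keyword arguments before any API call is made. The function names build_mcp_tool and build_request are hypothetical, and the default model is simply the one used in the earlier connection example.

```python
def build_mcp_tool(label, url, api_key=None):
    """Build the MCP tool configuration for one remote server."""
    tool = {
        "type": "mcp",
        "server_label": label,
        "server_url": url,
        "require_approval": "never",
    }
    if api_key:
        # Only servers that need authentication get an Authorization header
        tool["headers"] = {"Authorization": f"Bearer {api_key}"}
    return tool


def build_request(user_text, label, url, api_key=None, model="gpt-4.1"):
    """Combine model, tools and input into keyword arguments."""
    if label == "huggingface":
        prompt = f"List some resources relevant to this topic: {user_text}?"
    else:
        prompt = f"Summarize codebase contents relevant to this topic: {user_text}?"
    return {
        "model": model,
        "tools": [build_mcp_tool(label, url, api_key)],
        "input": prompt,
    }


request = build_request(
    "sentiment analysis", "deepwiki", "https://mcp.deepwiki.com/mcp"
)
print(request["input"])
```

The resulting dictionary can be unpacked into the call, for example client.responses.create(**request). Keeping this step as a pure function makes the prompt and tool configuration easy to inspect without spending tokens.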

The user’s input is then sent to the LLM, which leverages the selected MCP server as an external tool to fulfill the request. The response is displayed using the Streamlit info widget st.info(). If the request fails, the function shows an error message using st.error() instead.

    import os

    import streamlit as st
    from dotenv import load_dotenv
    from openai import OpenAI

    load_dotenv()
    HF_API_KEY = os.getenv('HF_API_KEY')

    def generate_response(user_text, selected_server, server_url):
        """Generate a response using the OpenAI client and MCP tools"""
        client = OpenAI()
        try:
            mcp_tool = {
                "type": "mcp",
                "server_label": selected_server,
                "server_url": server_url,
                "require_approval": "never",
            }
            if selected_server == 'huggingface':
                if HF_API_KEY:
                    # Pass the Hugging Face token as a bearer header
                    mcp_tool["headers"] = {"Authorization": f"Bearer {HF_API_KEY}"}
                else:
                    st.warning("Hugging Face API Key not found in .env. Some functionalities might be limited.")
                prompt_text = f"List some resources relevant to this topic: {user_text}?"
            else:
                prompt_text = f"Summarize codebase contents relevant to this topic: {user_text}?"
            response = client.responses.create(
                model="gpt-3.5-turbo",  # pick a model that supports MCP tools; the earlier example used gpt-4.1
                tools=[mcp_tool],
                input=prompt_text
            )
            # Show the model output in an info box
            st.info(f"**Response:**\n\n{response.output_text}")
            return response
        except Exception as e:
            st.error(f"Error generating response: {str(e)}")
            return None

5. Define the Main Function

The final step is to create a main() function that chains all operations together. This function sequentially calls initialize_page(), get_user_input(), and create_mcp_server_dropdown() to set up the UI and collect user inputs. It then triggers generate_response() when the user clicks st.button("Generate Response"). On click, the function checks whether user input exists, displays a spinner with st.spinner() to show progress, and generates the response. If no input is provided, the app displays a warning message instead of calling generate_response(), preventing unnecessary token usage and extra costs.
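The decision logic described here can be separated from the widgets, which makes it easy to test on its own. The sketch below is one possible way to express it; next_action is a hypothetical helper, and stripping whitespace is an extra assumption so that a blank-looking input does not trigger a paid request.

```python
def next_action(button_clicked: bool, user_text: str) -> str:
    """Decide what the app should do on the current script run."""
    if not button_clicked:
        return "idle"  # nothing happens until the button is pressed
    if not user_text or not user_text.strip():
        return "warn"  # ask for a topic instead of calling the API
    return "generate"  # safe to call generate_response()


print(next_action(False, "sentiment analysis"))
print(next_action(True, "   "))
print(next_action(True, "sentiment analysis"))
```

The main() function applies the same order of checks directly inside the button handler: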

    def main():
        # 1. Initialize the page layout
        main_column = initialize_page()
        # 2. Get user input for the topic
        user_text = get_user_input(main_column)
        # 3. Allow the user to select the MCP server
        with main_column:  # Place the radio buttons inside the main column
            selected_server, server_url = create_mcp_server_dropdown()
        # 4. Add a button to trigger the response generation
        if st.button("Generate Response", key="generate_button"):
            if user_text:
                with st.spinner("Generating response..."):
                    generate_response(user_text, selected_server, server_url)
            else:
                st.warning("Please enter a topic first.")

Run Streamlit Application

Finally, a standard Python script entry point ensures that our main function is executed when the script is run.

    if __name__ == "__main__":
        main()

Open your terminal or command prompt, navigate to the directory where you saved the file, and run:

    streamlit run app.py

If you are developing your app locally, a local Streamlit server will spin up and your app will open in a new tab in your default web browser. Alternatively, if you are developing in a cloud environment, such as AWS JupyterLab, replace the default URL with this format: https://.

Lastly, you can find the code in our GitHub repository “mcp-streamlit-client” and explore your Streamlit MCP client by trying out different topics.

Take-Home Message

In our previous article, we introduced the MCP architecture and focused on the MCP server. Building on this foundation, we have now implemented an MCP client with Streamlit that makes use of the tool-calling capabilities of remote MCP servers. This guide covers the essential steps: setting up your development environment, securing API keys, handling user input, connecting to remote MCP servers, and displaying AI-generated responses. To prepare this application for production, consider these next steps:

- Asynchronous processing of multiple client requests

- Caching mechanisms for faster response times

- Session state management

- User authentication and access management