The quest for Artificial General Intelligence (AGI) often feels like scaling an impossibly high mountain. One of the most challenging faces of this mountain is reasoning: the ability of AI not just to recall information or mimic patterns, but to think logically, solve complex problems step by step, and understand cause and effect. Recently, we’ve seen glimpses of impressive reasoning capabilities from large language models (LLMs) emerging from closed-door labs, like OpenAI’s GPT-4 series and DeepSeek’s R1-Zero. These models hint at a future where AI can tackle sophisticated scientific, mathematical, and logical challenges.

But what if the path to advanced reasoning doesn’t require hyper-complex, proprietary systems? What if a simpler, more accessible, and open approach could achieve comparable or even superior results, faster?

Enter Open-Reasoner-Zero (ORZ).

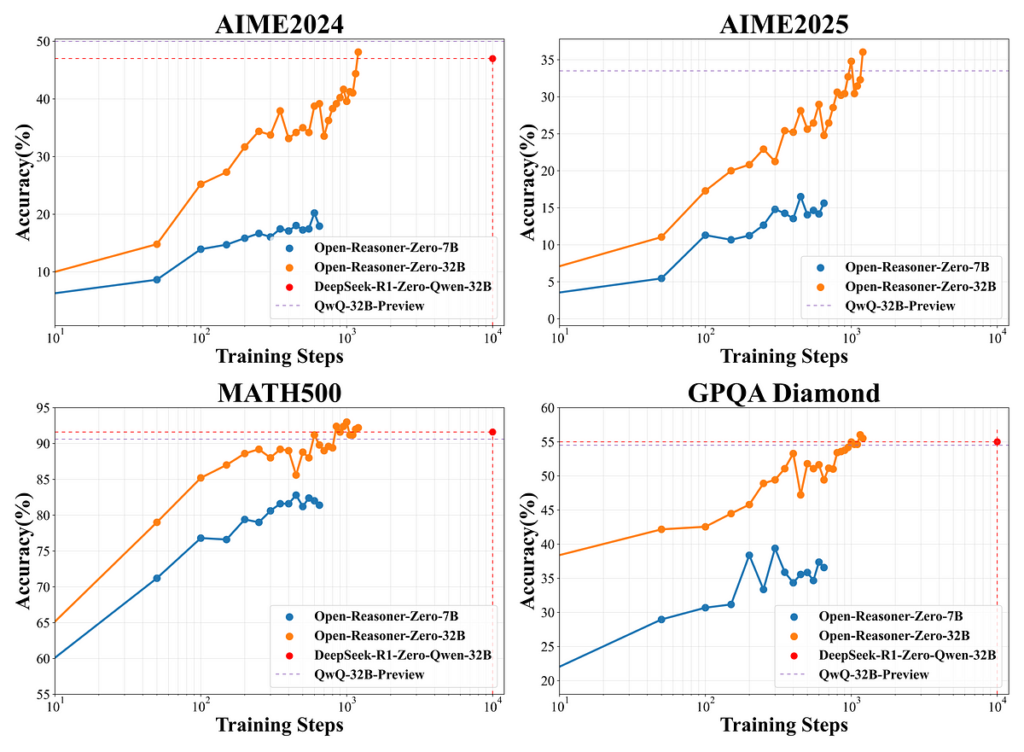

This groundbreaking open-source project challenges conventional wisdom in training reasoning models. The ORZ team demonstrates that a surprisingly minimalist approach, applying vanilla Reinforcement Learning (RL) directly to a base LLM, can unlock remarkable reasoning performance. Even more impressively, it achieves this while scaling efficiently across model sizes and requiring significantly fewer computational resources, sometimes needing only a tenth of the training steps compared to other advanced pipelines.

In this deep dive, we’ll unpack the philosophy, methodology, and stunning results of Open-Reasoner-Zero. We’ll explore how its “less is more” recipe works, examine the evidence backing its claims, and discuss its profound implications for the future of AI research and the open-source community. Get ready to explore a new, accessible path up the reasoning mountain.

For years, LLMs have dazzled us with their fluency, knowledge recall, and creative writing abilities. However, true reasoning has remained a significant hurdle. Standard LLM training (pre-training on vast text corpora followed by supervised fine-tuning) often struggles with tasks requiring:

- Multi-step logical deduction: Following a chain of reasoning, as in mathematical proofs or complex planning.

- Mathematical problem-solving: Beyond simple arithmetic, tackling algebra, calculus, or…