1. Evaluation is the cake, not the icing.

Evaluation has always been important in ML development, LLM or not. But I’d argue that it’s extra important in LLM development for two reasons:

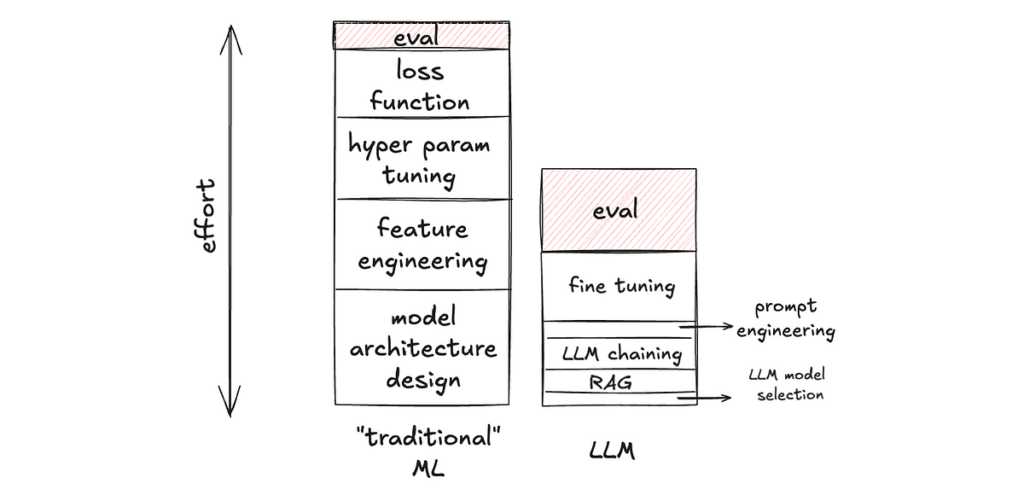

a) The relative importance of eval goes up, because there are fewer degrees of freedom in building LLM applications, making the time spent on non-eval work go down. In LLM development, building on top of foundation models such as OpenAI’s GPT or Anthropic’s Claude models, there are fewer knobs available to tweak in the application layer. And these knobs are much faster to tweak (caveat: faster to tweak, not necessarily faster to get right). For example, changing the prompt is arguably much faster to implement than writing a new hand-crafted feature for a Gradient-Boosted Decision Tree. Thus, there’s less non-eval work to do, making the proportion of time spent on eval go up.

b) The absolute importance of eval goes up, because there are more degrees of freedom in the output of generative AI, making eval a more complex task. In contrast with classification or ranking tasks, generative AI tasks (e.g. write an essay about X, make an image of Y, generate a trajectory for an autonomous vehicle) can have an infinite number of acceptable outputs. Thus, measurement is a process of projecting a high-dimensional space into lower dimensions. For example, for an LLM task, one can measure: “Is the output text factual?”, “Does the output contain harmful content?”, “Is the language concise?”, “Does it start with ‘certainly!’ too often?”, etc. If precision and recall in a binary classification task are lossless measurements of those binary outputs (measuring what you see), the example metrics I listed above for an LLM task are lossy measurements of the output text (measuring a low-dimensional representation of what you see). And that’s much harder to get right.
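To make the "lossy projection" point concrete, here is a minimal sketch. The two metrics, the function names, and the sample outputs are all assumptions made up for illustration (the opener string is a parameter, with "Certainly!" as an assumed example). It shows two sets of outputs that differ in factual correctness yet collapse to the same low-dimensional metric vector.

```python
from typing import Dict, Sequence


def opener_rate(outputs: Sequence[str], opener: str) -> float:
    """Fraction of outputs that begin with a given stock opener (case-insensitive)."""
    hits = sum(t.lstrip().lower().startswith(opener.lower()) for t in outputs)
    return hits / len(outputs)


def mean_word_count(outputs: Sequence[str]) -> float:
    """Crude conciseness proxy: average whitespace-separated word count."""
    return sum(len(t.split()) for t in outputs) / len(outputs)


def project(outputs: Sequence[str], opener: str = "Certainly!") -> Dict[str, float]:
    """Project free-form text outputs onto two scalar metrics (a lossy view)."""
    if not outputs:
        raise ValueError("need at least one output")
    return {
        "opener_rate": opener_rate(outputs, opener),
        "mean_word_count": mean_word_count(outputs),
    }


# Hypothetical outputs: set B is factually wrong, but these metrics cannot see it.
outputs_a = ["Certainly! Paris is the capital.", "It is in France."]
outputs_b = ["Certainly! Lyon is the capital.", "It is in Spain."]

metrics_a = project(outputs_a)
metrics_b = project(outputs_b)
print(metrics_a == metrics_b)  # True: different texts, identical measurements
```

Each metric answers one narrow question, and whatever none of them asks about (here, factuality) is invisible until another dimension is added.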

This paradigm shift has practical implications for team sizing and hiring when staffing a project on LLM applications.

2. Benchmark the distinction.

This is the dream scenario: we climb on a target metric and keep improving on it.

The reality?

You can barely draw more than 2 consecutive points on the graph!

These might sound familiar to you:

After the 1st launch, we acquired a much bigger dataset, so the new metric number is no longer an apples-to-apples comparison with the old number. And we can’t re-run the old model on the new dataset: maybe other parts of the system have been upgraded and we can’t check out the old commit to reproduce the old model; maybe the eval metric is an LLM-as-a-judge and the dataset is huge, so each eval run is prohibitively expensive, etc.

After the 2nd launch, we decided to change the output schema. For example, previously, we instructed the model to output a yes / no answer; now we instruct the model to output yes / no / maybe / I don’t know. So the previously carefully curated ground truth set is no longer valid.

After the 3rd launch, we decided to break the single LLM call into a composite of two calls, and we need to evaluate the sub-components. We need new datasets for sub-component eval.

….

The point is that the development cycle in the age of LLMs is often too fast for longitudinal tracking of the same metric.

So what’s the solution?

Measure the delta.

In other words, make peace with having just two consecutive points on that graph. The idea is to make sure each model version is better than the previous version (to the best of your knowledge at that point in time), even though it’s quite hard to know where its performance stands in absolute terms.

Suppose I have an LLM-based language tutor that first classifies the input as English or Spanish, and then offers grammar tips. A simple metric can be the accuracy of the “English / Spanish” label. Now, say I made some changes to the prompt and want to know whether the new prompt improves accuracy. Instead of hand-labeling a large data set and computing accuracy on it, another way is to just focus on the data points where the old and new prompts produce different labels. Wherever the two prompts agree, they are either both right or both wrong, so those data points cancel out of the accuracy difference. I won’t be able to know the absolute accuracy of either model this way, but I’ll know which model has higher accuracy.
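The disagreement-only comparison can be sketched as follows. This is a minimal illustration, not a prescribed implementation: `DeltaResult`, `benchmark_delta`, and the `human_label` callback (a stand-in for a person labeling one data point) are hypothetical names, and the sample labels are invented.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass
class DeltaResult:
    n_total: int      # size of the full, mostly unlabeled dataset
    n_disagree: int   # data points a human actually had to label
    new_wins: int     # disagreements where only the new prompt was right
    old_wins: int     # disagreements where only the old prompt was right

    @property
    def accuracy_delta(self) -> float:
        """accuracy(new) - accuracy(old) over the full dataset."""
        return (self.new_wins - self.old_wins) / self.n_total


def disagreement_indices(old_labels: Sequence[str], new_labels: Sequence[str]) -> List[int]:
    """Indices where the old and new prompts produced different labels."""
    if len(old_labels) != len(new_labels):
        raise ValueError("old and new label lists must have the same length")
    return [i for i, (o, n) in enumerate(zip(old_labels, new_labels)) if o != n]


def benchmark_delta(
    old_labels: Sequence[str],
    new_labels: Sequence[str],
    human_label: Callable[[int], str],
) -> DeltaResult:
    """Ask a human only about disagreements and tally which prompt was right."""
    if len(old_labels) == 0:
        raise ValueError("need at least one data point")
    idx = disagreement_indices(old_labels, new_labels)
    new_wins = old_wins = 0
    for i in idx:
        truth = human_label(i)
        if new_labels[i] == truth:
            new_wins += 1
        elif old_labels[i] == truth:
            old_wins += 1
        # If neither matches (possible with more than two classes), nobody scores.
    return DeltaResult(len(old_labels), len(idx), new_wins, old_wins)


# Invented example: five inputs, the prompts disagree on two of them.
old = ["en", "en", "es", "es", "en"]
new = ["en", "es", "es", "en", "en"]
hidden_truth = ["en", "es", "es", "en", "en"]  # stand-in for the human labeler
asked: List[int] = []


def ask(i: int) -> str:
    asked.append(i)
    return hidden_truth[i]


result = benchmark_delta(old, new, ask)
print(asked)                  # [1, 3]: only the disagreements were labeled
print(result.accuracy_delta)  # 0.4
```

The delta over the full dataset is exact even though most data points were never labeled; what stays unknown is the absolute accuracy of either prompt.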

I should clarify that I’m not saying benchmarking the absolute has no merits. I’m only saying we should be cognizant of the cost of doing so, and benchmarking the delta, albeit not a full substitute, can be a much more cost-effective way to get a directional conclusion. One of the more fundamental reasons for this paradigm shift is that if you are building your ML model from scratch, you often have to curate a large training set anyway, so the eval dataset can often be a byproduct of that. This is not the case with zero-shot and few-shot learning on pre-trained models (such as LLMs).

As a second example, perhaps I have an LLM-based metric: we use a separate LLM to judge whether the explanation produced by my LLM language tutor is clear enough. One might ask, “Since the eval is automated now, is benchmarking the delta still cheaper than benchmarking the absolute?” Yes. Because the metric is more complicated now, you can keep improving the metric itself (e.g. prompt engineering the LLM-based metric). For one, we still need to eval the eval; benchmarking the deltas tells you whether the new metric version is better. For another, as the LLM-based metric evolves, we don’t have to sweat over backfilling benchmark results for all the old versions of the LLM language tutor with the new LLM-based metric version, if we only focus on comparing two adjacent versions of the LLM language tutor.

Benchmarking the deltas can be an effective inner-loop, fast-iteration mechanism, while saving the more expensive approach of benchmarking the absolute or longitudinal tracking for the outer-loop, lower-cadence iterations.

3. Embrace human triage as an integral part of eval.

As discussed above, the dream of carefully triaging a golden set once and for all, such that it can be used as an evergreen benchmark, can be unattainable. Triaging will be an integral, continuous part of the development process, whether it’s triaging the LLM output directly, or triaging those LLM-as-judges or other kinds of more complex metrics. We should continue to make eval as scalable as possible; the point here is that despite that, we should not expect the elimination of human triage. The sooner we come to terms with this, the sooner we can make the right investments in tooling.

As such, whatever eval tools we use, in-house or not, there should be an easy interface for human triage. A simple interface can look like the following. Combined with the earlier point on benchmarking the difference, it has a side-by-side panel, and you can easily flip through the results. It should also allow you to easily record your triage notes such that they can be recycled as golden labels for future benchmarking (and hence reduce future triage load).
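The note-recycling part can be sketched in code. This is not the interface itself, only a hypothetical store that could sit behind one: `GoldenLabelStore`, `label_with_recycling`, and the `ask_human` callback are assumed names, and keying labels by the raw input text is a simplifying assumption.

```python
import json
from typing import Callable, Dict, Optional, Tuple


class GoldenLabelStore:
    """Keeps human triage verdicts so they can be reused as golden labels."""

    def __init__(self, labels: Optional[Dict[str, Dict[str, str]]] = None) -> None:
        self._labels: Dict[str, Dict[str, str]] = dict(labels or {})

    def record(self, input_text: str, label: str, note: str = "") -> None:
        """Save the triager's verdict and free-form note for one input."""
        self._labels[input_text] = {"label": label, "note": note}

    def lookup(self, input_text: str) -> Optional[str]:
        """Return the stored golden label, or None if never triaged."""
        entry = self._labels.get(input_text)
        return entry["label"] if entry else None

    def to_json(self) -> str:
        """Serialize so labels survive across benchmarking sessions."""
        return json.dumps(self._labels, ensure_ascii=False, indent=2)

    @classmethod
    def from_json(cls, text: str) -> "GoldenLabelStore":
        return cls(json.loads(text))


def label_with_recycling(
    input_text: str,
    store: GoldenLabelStore,
    ask_human: Callable[[str], Tuple[str, str]],
) -> str:
    """Use a recycled golden label when one exists; otherwise triage and record."""
    cached = store.lookup(input_text)
    if cached is not None:
        return cached
    label, note = ask_human(input_text)
    store.record(input_text, label, note)
    return label


# Invented example: the second request for the same input costs no human time.
human_calls = []


def fake_triager(text: str) -> Tuple[str, str]:
    human_calls.append(text)
    return "es", "informal Spanish, no accents typed"


store = GoldenLabelStore()
first = label_with_recycling("hola, como estas", store, fake_triager)
second = label_with_recycling("hola, como estas", store, fake_triager)
restored = GoldenLabelStore.from_json(store.to_json())
```

If the output schema changes between launches, stored labels may no longer apply, so in practice the key would likely need to include a schema or task version.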

A more advanced version ideally would be a blind test, where it’s unknown to the triager which side is which. We’ve repeatedly confirmed with data that when not doing blind testing, developers, even with the best intentions, have unconscious bias, favoring the version they developed.
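One way to set up the blinding is to randomize which version lands on which side for every item and keep the mapping away from the triager until the votes are in. The sketch below assumes hypothetical names (`BlindPair`, `make_blind_pairs`, `unblind`) and a three-way vote of "left", "right", or "tie".

```python
import random
from dataclasses import dataclass
from typing import Iterable, List, Optional, Tuple


@dataclass(frozen=True)
class BlindPair:
    item_id: str
    left: str           # shown to the triager
    right: str          # shown to the triager
    left_is_new: bool   # NOT shown; used only to unblind votes afterwards


def make_blind_pairs(
    items: Iterable[Tuple[str, str, str]],
    seed: Optional[int] = None,
) -> List[BlindPair]:
    """items yields (item_id, old_output, new_output); sides are coin-flipped per item."""
    rng = random.Random(seed)
    pairs = []
    for item_id, old_out, new_out in items:
        left_is_new = rng.random() < 0.5
        left, right = (new_out, old_out) if left_is_new else (old_out, new_out)
        pairs.append(BlindPair(item_id, left, right, left_is_new))
    return pairs


def unblind(pair: BlindPair, vote: str) -> str:
    """Map a triager vote ('left', 'right', 'tie') back to 'new', 'old', or 'tie'."""
    if vote == "tie":
        return "tie"
    if vote not in ("left", "right"):
        raise ValueError(f"unexpected vote: {vote!r}")
    picked_left = vote == "left"
    return "new" if picked_left == pair.left_is_new else "old"


# Invented example data: (item_id, old_output, new_output).
items = [
    ("q1", "old explanation 1", "new explanation 1"),
    ("q2", "old explanation 2", "new explanation 2"),
    ("q3", "old explanation 3", "new explanation 3"),
]
pairs = make_blind_pairs(items, seed=7)
```

Flipping per item, instead of once per session, keeps the triager from working out the mapping after the first few examples. A fixed seed makes the assignment reproducible for auditing.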