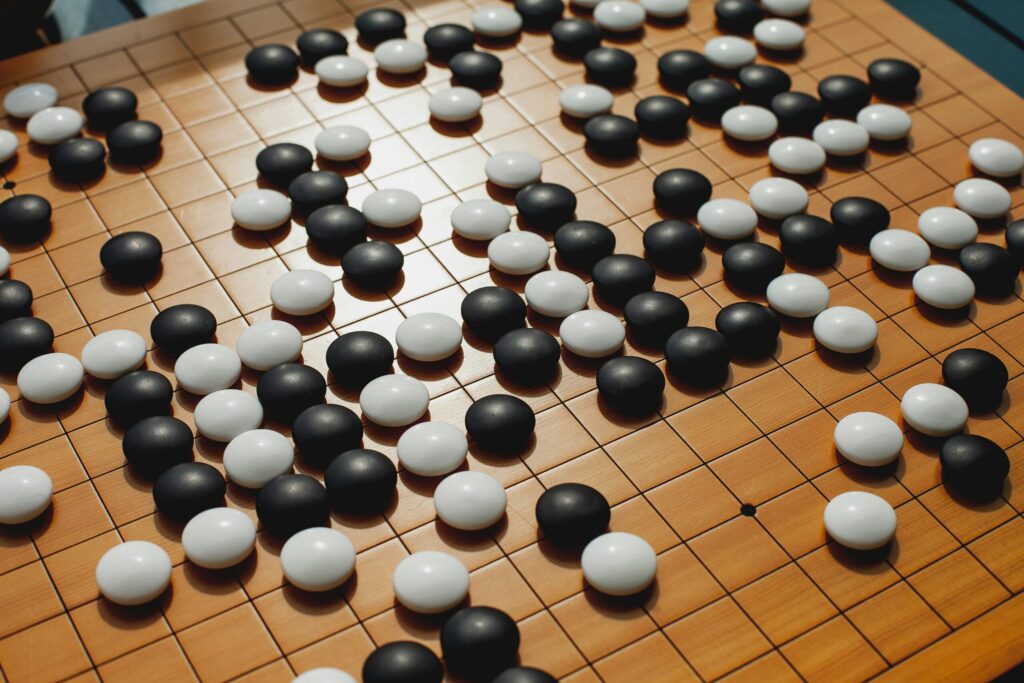

In 2016, Go world champion Lee Sedol faced an opponent that was made not of flesh and blood but of lines of code.

It soon became clear that the human had lost.

In the end, Lee Sedol lost 4:1.

Last week I watched the documentary AlphaGo again and found it just as fascinating as before.

The scary thing about it? AlphaGo didn’t get its style of play from databases, rules or strategy books.

Instead, it had played against itself millions of times and learned how to win in the process.

Move 37 in game 2 was the moment when the whole world understood: this AI doesn’t play like a human, it plays better.

AlphaGo combined supervised learning, reinforcement learning, and search. One fascinating part is that its strategy emerged from learning by playing against itself, using reinforcement learning to improve over time.

We now use reinforcement learning not only in games but also in robotics (for example gripper arms or household robots), in energy optimization (for example to reduce the energy consumption of data centers) and in traffic control (for example traffic light optimization).

Modern agents, too, combine large language models with reinforcement learning (e.g. Reinforcement Learning from Human Feedback), for example to make the responses of ChatGPT, Claude, or Gemini more human-like.

In this article, I’ll show you exactly how this works, and how we can better understand the mechanism using a simple game: Tic Tac Toe.

What is reinforcement learning?

When we watch a baby learning to walk, we see: it stands up, falls over, tries again, and at some point takes its first steps.

No teacher shows the baby how to do it. Instead, the baby tries out different movements by trial and error until it can walk.

When it can stand or walk a few steps, this is a reward for the baby. After all, its goal is to be able to walk. If it falls down, there is no reward.

This learning process of trial, error and reward is the basic idea behind reinforcement learning (RL).

Reinforcement learning is a learning approach in which an agent learns, through interaction with its environment, which actions lead to rewards.

Its goal: to obtain as much reward as possible in the long run.

- In contrast to supervised learning, there are no “right answers” or labels. The agent has to find out for itself which decisions are good.

- In contrast to unsupervised learning, the aim is not to find hidden patterns in the data, but to carry out those actions that maximize the reward.

How an RL agent thinks, decides and learns

For an RL agent to learn, it needs four things: an idea of where it currently is (state), what it can do (actions), what it wants to achieve (reward) and how well it has done with a strategy in the past (value).

An agent acts, gets feedback, and gets better.

For this to work, four components are needed:

1) Policy / Strategy

This is the rule or strategy according to which an agent decides which action to perform in a certain state. In simple cases, this is a lookup table. In more complex applications (e.g. with neural networks), it is a function.

2) Reward signal

The reward is the feedback from the environment. For example, this can be +1 for a win, 0 for a draw and -1 for a loss. The agent’s goal is to collect as much total reward as possible over the course of the interaction.

3) Value function

This function estimates the expected future reward of a state. The reward tells the agent whether an action was “good” or “bad” right now. The value function estimates how good a state is, not just immediately, but taking into account the future rewards the agent can expect from that state onward. It therefore estimates the long-term benefit of a state.

4) Model of the environment

A model tells the agent: “If I do action A in state S, I’ll probably end up in state S′ and get reward R.”

In model-free methods like Q-learning, however, this is not necessary.
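To make the idea of long-term reward more concrete, here is a minimal sketch of a discounted return, the quantity a value function tries to estimate. The helper name discounted_return and the discount factor gamma are assumptions chosen for illustration (gamma = 0.9 matches the default used by the agent later in this article); the reward sequences are made up.

```python
def discounted_return(rewards, gamma=0.9):
    """Sum a reward sequence, weighting a reward k steps ahead by gamma**k."""
    total = 0.0
    # Walk backwards so that each step adds its reward to the discounted future.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# A win (+1) that only arrives two moves from now ...
print(discounted_return([0, 0, 1]))
# ... is worth less than a win that arrives immediately.
print(discounted_return([1]))
```

With gamma = 0.9, the delayed win is worth roughly 0.81 instead of 1.0, which is why states closer to a win end up with higher values.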

Exploitation vs. exploration: Move 37 and what we can learn from it

You may remember move 37 from game 2 between AlphaGo and Lee Sedol:

An unusual move that looked like a mistake to us humans, but was later hailed as genius.

Why did the algorithm do that?

The computer program was trying out something new. This is called exploration.

Reinforcement learning needs both: an agent must find a balance between exploitation and exploration.

- Exploitation means that the agent uses the actions it already knows to work well.

- Exploration, on the other hand, means trying actions that the agent has not tried before. It tries them because they could be better than the actions it already knows.

The agent tries to find the optimal strategy through trial and error.
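One common way to balance the two is the ε-greedy rule. The following is a minimal sketch, not the agent built later in this article: the function name epsilon_greedy, the rng parameter and the example estimates are assumptions for illustration.

```python
import random


def epsilon_greedy(q_values, epsilon=0.1, rng=random):
    """Pick an action from a dict that maps action -> estimated value."""
    actions = list(q_values)
    if rng.random() < epsilon:
        # Exploration: try any action, even one that currently looks bad.
        return rng.choice(actions)
    # Exploitation: take the action with the highest current estimate.
    return max(actions, key=q_values.get)


# Hypothetical value estimates for three opening moves.
estimates = {"corner": 0.2, "center": 0.7, "edge": 0.1}
print(epsilon_greedy(estimates, epsilon=0.0))  # always the greedy choice: center
```

With epsilon = 0 the agent never explores; with epsilon = 1 it only explores. Small values such as 0.1 keep most moves greedy while still occasionally testing alternatives.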

Tic-Tac-Toe with reinforcement learning

Let’s try out reinforcement learning with a very well-known game.

You’ve probably played it as a child too: Tic Tac Toe.

The game is ideal as an introductory example, since it doesn’t require a neural network, the rules are clear and we can implement the game with just a little Python:

- Our agent starts with zero knowledge of the game. It starts like a human seeing the game for the first time.

- The agent gradually evaluates each game situation: a score of 0.5 means “I don’t know yet whether I’m going to win here.” A 1.0 means “This situation will almost certainly lead to victory.”

- By playing many games, the agent observes what works and adapts its strategy.

The goal? For each turn, the agent should choose the action that leads to the highest long-term reward.
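The scoring idea from the list above (0.5 for “unknown”, 1.0 for “almost certain win”) can be sketched as a table of state values that is nudged toward the value of the state that follows. This is a simplified illustration and not the code built in this article, which stores Q-values per state-action pair and starts them at 0; the names values and td_update, the step size alpha and the state labels are assumptions.

```python
from collections import defaultdict

# Every unseen state starts at 0.5: "I don't know yet whether I will win here."
values = defaultdict(lambda: 0.5)


def td_update(values, state, next_state, alpha=0.1):
    """Move the estimate for `state` a small step toward that of `next_state`."""
    values[state] += alpha * (values[next_state] - values[state])
    return values[state]


# Hypothetical states: "won" stands for a finished, winning position.
values["won"] = 1.0
for _ in range(3):
    td_update(values, "one_move_from_win", "won")
print(values["one_move_from_win"])
```

After three such updates, the estimate for the state just before the win has moved from 0.5 to roughly 0.64, and it keeps approaching 1.0 the more often that win is observed.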

In this section, we’ll build such an RL system step by step and create the file TicTacToeRL.py.

→ You can find all the code in this GitHub repository.

1. Building the environment of the game

In reinforcement learning, an agent learns through interactions with an environment. The environment determines what a state is (e.g. the current board), which actions are permitted (e.g. where you can place a mark) and what feedback there is on an action (e.g. a reward of +1 if you win).

In theory, we refer to this setup as a Markov Decision Process: a model consisting of states, actions and rewards.

First, we create a class TicTacToe. It manages the game board, which we create as a 3×3 NumPy array, and the game logic:

- The reset(self) function starts a new game.

- The function available_actions() returns all free fields.

- The function step(self, action, player) executes a game move. Here we return the new state, a reward (1 = win, 0.5 = draw, 0 = game continues, -10 = invalid move) and the game status. In this example we penalize invalid moves heavily with -10 so that the agent learns to avoid them quickly, a common technique in small RL environments.

- The function check_winner() checks whether a player has three X’s or O’s in a row and has therefore won.

- With render_gui() we display the current board with matplotlib as X and O graphics.

```python
import numpy as np
import matplotlib
matplotlib.use('TkAgg')  # select the Tk backend before importing pyplot
import matplotlib.pyplot as plt
import random
from collections import defaultdict


# Tic-Tac-Toe game environment
class TicTacToe:
    def __init__(self):
        # 0 = empty field, 1 = agent (X), -1 = opponent (O)
        self.board = np.zeros((3, 3), dtype=int)
        self.done = False
        self.winner = None

    def reset(self):
        self.board[:] = 0
        self.done = False
        self.winner = None
        return self.get_state()

    def get_state(self):
        # Hashable snapshot of the board, usable as a key in the Q-table
        return tuple(self.board.flatten())

    def available_actions(self):
        return [(i, j) for i in range(3) for j in range(3) if self.board[i, j] == 0]

    def step(self, action, player):
        if self.done:
            raise ValueError("Game is over")
        i, j = action
        if self.board[i, j] != 0:
            # Invalid move: heavy penalty and the episode ends
            return self.get_state(), -10, True
        self.board[i, j] = player
        if self.check_winner(player):
            self.done = True
            self.winner = player
            return self.get_state(), 1, True
        elif not self.available_actions():
            # Board is full and nobody has won: draw
            self.done = True
            return self.get_state(), 0.5, True
        return self.get_state(), 0, False

    def check_winner(self, player):
        # Rows and columns
        for i in range(3):
            if all(self.board[i, :] == player) or all(self.board[:, i] == player):
                return True
        # Both diagonals
        if all(np.diag(self.board) == player) or all(np.diag(np.fliplr(self.board)) == player):
            return True
        return False

    def render_gui(self):
        fig, ax = plt.subplots()
        ax.set_xticks([0.5, 1.5], minor=False)
        ax.set_yticks([0.5, 1.5], minor=False)
        ax.set_xticks([], minor=True)
        ax.set_yticks([], minor=True)
        ax.set_xlim(-0.5, 2.5)
        ax.set_ylim(-0.5, 2.5)
        ax.grid(True, which='major', color='black', linewidth=2)
        for i in range(3):
            for j in range(3):
                value = self.board[i, j]
                if value == 1:
                    # X for player 1
                    ax.plot(j, 2 - i, 'x', markersize=20, markeredgewidth=2, color='blue')
                elif value == -1:
                    # O for player -1
                    circle = plt.Circle((j, 2 - i), 0.3, fill=False, color='purple', linewidth=2)
                    ax.add_patch(circle)
        ax.set_aspect('equal')
        plt.axis('off')
        plt.show()
```

2. Program the Q-learning agent

Next, we define the learning part: our agent.

It decides which action to perform in a given state to get as much reward as possible.

The agent uses the classic RL method Q-learning. A Q-value is stored for each combination of state and action: the estimated long-term benefit of this action.

The most important methods are:

- Using the choose_action(self, state, actions) function, the agent decides in each game situation whether to choose an action that it already knows well (exploitation) or whether to try out a new action that has not yet been sufficiently tested (exploration). This decision is based on the so-called ε-greedy approach: with a probability of ε = 0.1 the agent chooses a random action (exploration), and with 90 % probability (1 - ε) it chooses the currently best known action based on its Q-table (exploitation).

- With the function update(state, action, reward, next_state, next_actions) we adjust the Q-value depending on how good the action was and what happens afterwards. This is the central learning step for the agent.
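To see what a single learning step does to a number, here is a small stand-alone sketch of the Q-learning update rule. The helper name q_update is an assumption for illustration; alpha = 0.1 and gamma = 0.9 are the default values of the agent in this article, and the example inputs are made up.

```python
def q_update(old_q, reward, max_q_next, alpha=0.1, gamma=0.9):
    """Return the new Q-value after one Q-learning step."""
    # Target: immediate reward plus the discounted best value of the next state.
    target = reward + gamma * max_q_next
    # Move the old estimate a fraction alpha toward that target.
    return old_q + alpha * (target - old_q)


# A winning move (reward 1, no follow-up state) seen for the first time:
print(q_update(old_q=0.0, reward=1, max_q_next=0.0))
# An earlier move without immediate reward that leads to a state worth 0.5:
print(q_update(old_q=0.0, reward=0, max_q_next=0.5))
```

The first call moves the estimate from 0 to 0.1, the second to about 0.045. Over many games, the reward from the end of a game is passed backwards to earlier moves in this way, one small step at a time.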

```python
# Q-learning agent
class QLearningAgent:
    def __init__(self, alpha=0.1, gamma=0.9, epsilon=0.1):
        self.q_table = defaultdict(float)  # unseen (state, action) pairs start at 0.0
        self.alpha = alpha      # learning rate
        self.gamma = gamma      # discount factor for future rewards
        self.epsilon = epsilon  # probability of a random (exploratory) action

    def get_q(self, state, action):
        return self.q_table[(state, action)]

    def choose_action(self, state, actions):
        if random.random() < self.epsilon:
            # Exploration: random action
            return random.choice(actions)
        else:
            # Exploitation: best known action, ties broken at random
            q_values = [self.get_q(state, a) for a in actions]
            max_q = max(q_values)
            best_actions = [a for a, q in zip(actions, q_values) if q == max_q]
            return random.choice(best_actions)

    def update(self, state, action, reward, next_state, next_actions):
        # Best Q-value reachable from the next state (0 if the game is over)
        max_q_next = max([self.get_q(next_state, a) for a in next_actions], default=0)
        old_value = self.q_table[(state, action)]
        new_value = old_value + self.alpha * (reward + self.gamma * max_q_next - old_value)
        self.q_table[(state, action)] = new_value
```

3. Train the agent

The actual learning process begins in this step. During training, the agent learns through trial and error: it plays many games, remembers which actions have worked well, and adapts its strategy.

During training, the agent learns how its actions are rewarded, how its behavior affects later states and how better strategies develop in the long run.

- With the function train(agent, episodes=10000) we define that the agent plays 10,000 games against a simple random opponent. In each episode, the agent (player 1) makes a move, followed by the opponent (player -1). After each pair of moves, or as soon as the game ends, the agent learns by means of update().

- Every 1000 games we save how many wins, draws and defeats there were.

- Finally, we plot the learning curve with matplotlib. It shows how the agent improves over time.

```python
# Training with learning curve
def train(agent, episodes=10000):
    env = TicTacToe()
    results = {"win": 0, "draw": 0, "loss": 0}
    win_rates = []
    draw_rates = []
    loss_rates = []

    for episode in range(episodes):
        state = env.reset()
        done = False

        while not done:
            # Agent's move
            actions = env.available_actions()
            action = agent.choose_action(state, actions)
            next_state, reward, done = env.step(action, player=1)

            if done:
                agent.update(state, action, reward, next_state, [])
                if reward == 1:
                    results["win"] += 1
                elif reward == 0.5:
                    results["draw"] += 1
                else:
                    results["loss"] += 1
                break

            # Random opponent's move
            opp_actions = env.available_actions()
            opp_action = random.choice(opp_actions)
            next_state2, reward2, done = env.step(opp_action, player=-1)

            if done:
                # The opponent's reward is negated from the agent's point of view.
                # Since the agent moves first and makes the ninth move, the
                # opponent can only end the game by winning.
                agent.update(state, action, -1 * reward2, next_state2, [])
                if reward2 == 1:
                    results["loss"] += 1
                elif reward2 == 0.5:
                    results["draw"] += 1
                else:
                    results["win"] += 1
                break

            # Game continues: learn from the state reached after the opponent's reply
            next_actions = env.available_actions()
            agent.update(state, action, reward, next_state2, next_actions)
            state = next_state2

        # Record and reset the statistics every 1000 episodes
        if (episode + 1) % 1000 == 0:
            total = sum(results.values())
            win_rates.append(results["win"] / total)
            draw_rates.append(results["draw"] / total)
            loss_rates.append(results["loss"] / total)
            print(f"Episode {episode+1}: Wins {results['win']}, Draws {results['draw']}, Losses {results['loss']}")
            results = {"win": 0, "draw": 0, "loss": 0}

    x = [i * 1000 for i in range(1, len(win_rates) + 1)]
    plt.plot(x, win_rates, label="Win Rate")
    plt.plot(x, draw_rates, label="Draw Rate")
    plt.plot(x, loss_rates, label="Loss Rate")
    plt.xlabel("Episodes")
    plt.ylabel("Rate")
    plt.title("Learning curve of the Q-learning agent")
    plt.legend()
    plt.grid(True)
    plt.tight_layout()
    plt.show()
```

4. Visualization of the board

With the main program block “if __name__ == "__main__":” we define the entry point of the program. It ensures that the training of the agent runs automatically when we execute the script. We then use the render_gui() method to display the Tic-Tac-Toe board as a graphic.

```python
# Main program
if __name__ == "__main__":
    agent = QLearningAgent()
    train(agent, episodes=10000)

    # Visualization of an example board
    env = TicTacToe()
    env.board[0, 0] = 1
    env.board[1, 1] = -1
    env.render_gui()
```

Execution in the terminal

We save the code in the file TicTacToeRL.py.

In the terminal, we now navigate to the directory where TicTacToeRL.py is saved and execute the file with the command “python TicTacToeRL.py”.

In the terminal, we can see how many games our agent has won after every thousandth episode.

And in the visualization we see the learning curve.

Final Thoughts

With Tic Tac Toe, we use a simple game and a little Python, but we can easily see how reinforcement learning works:

- The agent starts without any prior knowledge.

- It develops a strategy through feedback and experience.

- Its decisions gradually improve as a result, not because it knows the rules, but because it learns.

In our example, the opponent was a random agent. Next, we could see how our Q-learning agent performs against another learning agent or against ourselves.

Reinforcement learning shows us that machine intelligence is not only created through knowledge or information, but through experience, feedback and adaptation.