It was giving me OK answers, and then it just started hallucinating. We’ve all heard about it or experienced it.

Natural Language Generation models can sometimes hallucinate, i.e., they start generating text that’s not quite accurate for the prompt provided. In layman’s terms, they start making stuff up that’s either not strictly related to the given context or plainly inaccurate. Some hallucinations can be understandable, for example, mentioning something related but not exactly the topic in question. Other times the output may look like legitimate information, but it’s simply not correct: it’s made up.

This is clearly a problem when we start using generative models to complete tasks and intend to consume the information they generate to make decisions.

The problem is not necessarily tied to how the model generates the text, but to the information it uses to generate a response. Once you train an LLM, the information encoded in the training data is crystallized: it becomes a static representation of everything the model knows up until that point in time. To make the model update its world view or its knowledge base, it needs to be retrained. However, training Large Language Models requires time and money.

One of the main motivations for developing RAG is the increasing demand for factually accurate, contextually relevant, and up-to-date generated content.[1]

When thinking about ways to make generative models aware of the wealth of new information created every day, researchers started exploring efficient ways to keep these models up-to-date without continuously retraining them.

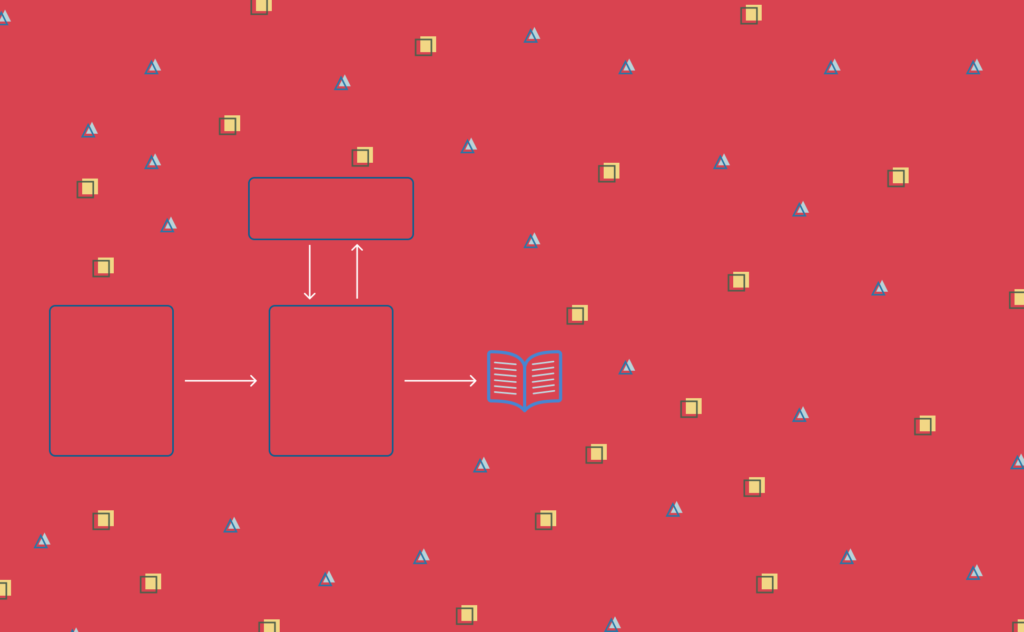

They came up with the idea of Hybrid Models, meaning generative models that can fetch external information to complement the data the LLM already knows and was trained on. These models have an information retrieval component that gives the model access to up-to-date data, alongside the generative capabilities they’re already well known for. The goal is to ensure both fluency and factual correctness when generating text.

This hybrid model architecture is called Retrieval Augmented Generation, or RAG for short.
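To make the two components concrete, here is a minimal sketch of a retrieve-then-generate loop. It is a toy, not how production RAG systems are built. The three-document corpus, the word-overlap scorer in `retrieve()`, and `fake_generate()` are all hypothetical stand-ins. A real system would use a learned retriever and an actual LLM call in their place.

```python
import re

# Hypothetical toy corpus standing in for an external document store.
CORPUS = [
    "The Brooklyn Bridge opened in 1883 and connects Manhattan and Brooklyn.",
    "The Golden Gate Bridge spans the Golden Gate strait in San Francisco.",
    "Transformers use self-attention to model relationships between tokens.",
]

def tokenize(text: str) -> set[str]:
    """Lowercase the text and keep its alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, corpus: list[str], k: int = 2) -> list[str]:
    """Retrieval step (toy): rank documents by how many query words they share."""
    q = tokenize(query)
    ranked = sorted(corpus, key=lambda doc: len(q & tokenize(doc)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, passages: list[str]) -> str:
    """Augmentation step: place numbered sources in front of the question."""
    sources = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer using only the sources below and cite them.\n"
        f"Sources:\n{sources}\n\nQuestion: {query}\nAnswer:"
    )

def fake_generate(prompt: str) -> str:
    """Generation step: hypothetical placeholder for a call to an LLM."""
    return f"<LLM output for a {len(prompt)}-character prompt>"

query = "When did the Brooklyn Bridge open?"
passages = retrieve(query, CORPUS)
prompt = build_prompt(query, passages)
print(prompt)
print(fake_generate(prompt))
```

The numbered sources in the prompt are one simple way to let the generator point back to where its answer came from. That is similar in spirit to the source list Gemini shows under its answers.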

The RAG era

Given the critical need to keep models updated in a time- and cost-effective way, RAG has become an increasingly popular architecture.

Its retrieval mechanism pulls information from external sources that are not encoded in the LLM. For example, you can see RAG in action in the real world when you ask Gemini something about the Brooklyn Bridge. At the bottom of the answer, you’ll see the external sources it pulled information from.

By grounding the final output on information obtained from the retrieval module, these Generative AI applications are less likely to propagate biases originating from the outdated, point-in-time view of the training data they used.

The second piece of the RAG architecture is the one most visible to us as consumers: the generation model. This is typically an LLM that processes the retrieved information and generates human-like text.

RAG combines retrieval mechanisms with generative language models to enhance the accuracy of outputs[1]

As for its internal architecture, the retrieval module relies on dense vectors to identify the relevant documents to use, while the generative model uses the typical transformer-based LLM architecture.
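Retrieval over dense vectors boils down to comparing a query embedding with passage embeddings. DPR [3] scores a passage by the inner product of the question and passage embeddings, so a sketch only needs a matrix-vector product and a sort. The 4-dimensional vectors below are hypothetical placeholders. In DPR they would be produced by two trained BERT encoders, one for questions and one for passages.

```python
import numpy as np

# Hypothetical passage embeddings (one row per passage); a real system
# would compute these offline with a trained passage encoder.
passage_texts = [
    "Brooklyn Bridge history",
    "Golden Gate Bridge history",
    "Intro to transformers",
]
passage_vecs = np.array([
    [0.9, 0.1, 0.0, 0.2],
    [0.7, 0.6, 0.0, 0.1],
    [0.0, 0.1, 0.9, 0.3],
])

# Hypothetical embedding of the user's question from a question encoder.
query_vec = np.array([0.8, 0.2, 0.1, 0.1])

def top_k_dense(query: np.ndarray, passages: np.ndarray, k: int = 2) -> list[int]:
    """Score every passage by inner product with the query; return top-k indices."""
    scores = passages @ query  # one similarity score per passage
    return np.argsort(-scores)[:k].tolist()

best = top_k_dense(query_vec, passage_vecs)
print([passage_texts[i] for i in best])
```

With millions of passages, the full matrix product would normally be replaced by a nearest-neighbor index (the DPR paper uses FAISS). The scoring idea stays the same.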

This architecture addresses critical pain points of generative models, but it’s not a silver bullet. It also comes with some challenges and limitations.

The retrieval module may struggle to fetch the most up-to-date documents.

This part of the architecture relies heavily on Dense Passage Retrieval (DPR)[2, 3]. Compared to other techniques such as BM25, which is based on TF-IDF, DPR does a much better job of capturing the semantic similarity between query and documents. Leveraging semantic meaning instead of simple keyword matching is especially useful in open-domain applications. Think of tools like Gemini or ChatGPT, which are not necessarily experts in a particular domain but know a little bit about everything.
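To see why keyword matching falls short, here is a minimal sketch of Okapi BM25. It uses whitespace tokenization, the commonly used defaults `k1=1.5` and `b=0.75`, and the non-negative IDF variant used by Lucene. These are assumptions for illustration, not parameters taken from the papers cited here. The second document paraphrases the first ("inaugurated" instead of "opened"), yet BM25 gives it a score of zero because no query term appears in it verbatim.

```python
import math
from collections import Counter

def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Okapi BM25 score of every document for the query (whitespace tokenization)."""
    tokenized = [d.lower().split() for d in docs]
    n_docs = len(tokenized)
    avgdl = sum(len(t) for t in tokenized) / n_docs
    # Document frequency: in how many documents each term appears.
    df = Counter(term for t in tokenized for term in set(t))
    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue  # exact keyword match only; synonyms earn nothing
            idf = math.log((n_docs - df[term] + 0.5) / (df[term] + 0.5) + 1)
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avgdl)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores

docs = [
    "the bridge opened in 1883",
    "the span was inaugurated in 1883",
    "attention is all you need",
]
scores = bm25_scores("bridge opened", docs)
print(scores)
```

A dense retriever could place "opened" and "inaugurated" close together in embedding space, which is exactly the gap the paragraph above describes.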

However, DPR has its shortcomings too. The dense vector representation can lead to irrelevant or off-topic documents being retrieved. DPR models also seem to retrieve information based on knowledge that already exists within their parameters, i.e., facts must already be encoded in the model to be accessible by retrieval[2].

[…] if we extend our definition of retrieval to also encompass the ability to navigate and elucidate concepts previously unknown or unencountered by the model, a capacity akin to how humans research and retrieve information, our findings imply that DPR models fall short of this mark.[2]

To mitigate these challenges, researchers considered adding more sophisticated query expansion and contextual disambiguation. Query expansion is a set of techniques that modify the original user query by adding relevant terms, with the goal of connecting the intent of the user’s query with relevant documents[4].
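A minimal sketch of the idea follows: look up each query word in a table of related terms and append them to the query. The `SYNONYMS` table and `expand_query()` are hypothetical. Real systems might draw the extra terms from a thesaurus, from pseudo-relevance feedback, or from an LLM, and this sketch is not the specific method of [4].

```python
# Hypothetical table of related terms for a couple of query words.
SYNONYMS = {
    "opened": ["inaugurated", "opening"],
    "car": ["automobile", "vehicle"],
}

def expand_query(query: str, synonyms: dict[str, list[str]]) -> str:
    """Append related terms for every query word found in the synonym table."""
    words = query.lower().split()
    extra = [s for w in words for s in synonyms.get(w, []) if s not in words]
    return " ".join(words + extra)

expanded = expand_query("When was the bridge opened", SYNONYMS)
print(expanded)  # → when was the bridge opened inaugurated opening
```

With the expanded query, even a purely keyword-based retriever can now match a passage that says the bridge was "inaugurated". The trade-off is that poorly chosen expansion terms can also pull in off-topic documents.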

There are also cases where the generative module fails to fully incorporate the information gathered in the retrieval phase into its responses. To address this, there have been new improvements in attention and hierarchical fusion techniques [5].

Model performance is an important metric, especially when the goal of these applications is to become a seamless part of our day-to-day lives and make mundane tasks almost effortless. However, running RAG end-to-end can be computationally expensive. Every user query requires one step for information retrieval and another for text generation. This is where techniques such as Model Pruning [6] and Knowledge Distillation [7] come into play. They help keep the overall system performant, even with the additional step of searching for up-to-date information outside the model’s training data.
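Both techniques can be sketched in a few lines of NumPy, under simplifying assumptions. `magnitude_prune()` zeroes the smallest-magnitude weights, which is the core step of the pruning approach in [6]. The retraining that follows pruning there is omitted. `distillation_loss()` is the standard soft-target loss: a KL divergence between temperature-softened teacher and student distributions, scaled by T². DistilBERT [7] combines a soft-target loss of this kind with other training objectives. The weights, logits, and temperature below are hypothetical values chosen for illustration.

```python
import numpy as np

def magnitude_prune(weights: np.ndarray, sparsity: float) -> np.ndarray:
    """Zero out (roughly) the given fraction of weights with the smallest magnitude."""
    threshold = np.quantile(np.abs(weights), sparsity)
    return np.where(np.abs(weights) < threshold, 0.0, weights)

def softmax(logits: np.ndarray, temperature: float = 1.0) -> np.ndarray:
    """Row-wise softmax with a temperature; higher values give softer distributions."""
    z = logits / temperature
    z = z - z.max(axis=-1, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def distillation_loss(student_logits: np.ndarray, teacher_logits: np.ndarray,
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = np.sum(p_teacher * (np.log(p_teacher) - np.log(p_student)), axis=-1)
    return float(np.mean(kl) * temperature ** 2)

# Hypothetical 2x2 weight matrix: pruning half of it keeps 0.5 and -0.9.
W = np.array([[0.5, -0.01], [0.2, -0.9]])
pruned = magnitude_prune(W, sparsity=0.5)
print(pruned)

# Hypothetical logits for one example over three classes.
teacher = np.array([[4.0, 1.0, 0.5]])
student = np.array([[2.0, 1.5, 0.5]])
print(distillation_loss(student, teacher))
```

Pruning shrinks the work per forward pass by removing weights, while distillation trains a smaller student to mimic a larger teacher. Both aim to cut inference cost, which matters when every query pays for retrieval plus generation.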

Lastly, while the information retrieval module in the RAG architecture is meant to mitigate bias by accessing external sources that are more up-to-date than the model’s training data, it may not fully eliminate bias. If the external sources are not meticulously chosen, they can keep adding bias or even amplify existing biases from the training data.

Conclusion

Using RAG in generative applications significantly improves the model’s ability to stay up-to-date and gives its users more accurate results.

When used in domain-specific applications, its potential is even clearer. With a narrower scope and an external library of documents pertaining only to a particular domain, these models can retrieve new information more effectively.

However, ensuring generative models are constantly up-to-date is far from a solved problem.

Technical challenges, such as handling unstructured data or ensuring model performance, continue to be active research topics.

Hope you enjoyed learning a bit more about RAG and the role this type of architecture plays in keeping generative applications up-to-date without having to retrain the model.

Thanks for reading!

- Gupta, S., Ranjan, R., & Singh, S. N. (2024). A Comprehensive Survey of Retrieval-Augmented Generation (RAG): Evolution, Current Landscape and Future Directions. (ArXiv)

- Reichman, B., & Heck, L. (2024). Retrieval-Augmented Generation: Is Dense Passage Retrieval Retrieving? (link)

- Karpukhin, V., Oguz, B., Min, S., Lewis, P., Wu, L., Edunov, S., Chen, D., & Yih, W. T. (2020). Dense Passage Retrieval for Open-Domain Question Answering. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP) (pp. 6769-6781). (ArXiv)

- Koo, H., Kim, M., & Hwang, S. J. (2024). Optimizing Query Generation for Enhanced Document Retrieval in RAG. (ArXiv)

- Izacard, G., & Grave, E. (2021). Leveraging Passage Retrieval with Generative Models for Open Domain Question Answering. In Proceedings of the 16th Conference of the European Chapter of the Association for Computational Linguistics: Main Volume (pp. 874-880). (ArXiv)

- Han, S., Pool, J., Tran, J., & Dally, W. J. (2015). Learning both Weights and Connections for Efficient Neural Network. In Advances in Neural Information Processing Systems (pp. 1135-1143). (ArXiv)

- Sanh, V., Debut, L., Chaumond, J., & Wolf, T. (2019). DistilBERT, a distilled version of BERT: smaller, faster, cheaper and lighter. arXiv:1910.01108. (ArXiv)